When marketing AI finds vulnerability instead of value: Nicole Alexander on ethical optimization

Your marketing team just got access to a powerful new AI tool. It promises better personalization, higher conversion rates, and campaign optimization at scale. But there’s a question most vendors won’t help you answer: is your AI finding the right customers, or is it finding the right moment of weakness?

In our latest Humans of AI episode, Nicole Alexander — former Global Head of Marketing at Meta, NYU professor, and author of “Ethical AI in Marketing” — offers a framework for enterprise marketers navigating this challenge. Her insights reveal how to evaluate AI tools, design campaigns that build trust rather than exploit vulnerability, and create sustainable growth rather than short-term wins that erode your brand.

- The efficiency trap: AI optimized purely for conversion finds patterns in human vulnerability, not just customer interest — and vendors promising the highest conversion rates may be exploiting weakness in your customers

- Five questions to ask vendors: From “what constraints govern your optimization?” to “how do you measure trust debt?” — a practical framework for evaluating AI marketing tools

- Guardrails as features, not filters: Systems that build ethical constraints into optimization (not bolt them on afterward) achieve goals while maintaining trust and avoiding adversarial dynamics

- The technical debt metaphor: “The conversion that destroys trust isn’t a win — it’s technical debt on your customer lifetime value” — a business case for sustainable optimization that resonates with leadership

- Growth vs responsibility: You can A/B test your way to higher conversion rates, but you can’t optimize your way to ethical marketing — that requires human judgment about what builds lasting relationships

The hidden risk in “better optimization”

When your AI vendor demos impressive conversion lifts, what are you actually seeing? Nicole’s experience leading marketing teams at Meta revealed an uncomfortable truth: AI systems optimizing purely for conversion don’t distinguish between finding engaged customers and finding vulnerable moments.

“The AI isn’t finding a buyer,” she observes. “It’s finding a person that is incapable of making a rational decision in that moment. So it’s weaponizing the idea that they’re vulnerable and they’re more likely to convert because of this vulnerability.”

For enterprise marketers, this creates a critical evaluation challenge. Traditional marketing asks: Does this campaign work? An AI system optimized purely for conversion effectively answers a different question: Which vulnerability is the most profitable lever to pull? The difference matters because the first builds sustainable customer relationships, while the second borrows against future value.

A cautionary tale from social platforms

The risks of optimization without constraints aren’t theoretical — they’re playing out at scale on social platforms. These examples serve as cautionary tales for enterprise marketers evaluating AI tools.

Yale researchers studying 12.7 million tweets found that each angry or moral word increased sharing by 17%. Researchers at the Knight First Amendment Institute at Columbia University found that Twitter’s algorithm amplified rage-filled political posts by 47% compared to chronological feeds. The platforms weren’t explicitly programmed to prefer outrage; they were simply optimized for engagement, and the systems learned that anger is “sticky.”

“I think the key failure is the assumption that if something is efficient, it must be ethical,” Nicole argues, “and that’s just fundamentally untrue.”

This is the efficiency trap. When platforms optimized for time spent without equally weighted constraints around user wellbeing, the algorithms discovered that emotional arousal, particularly negative emotions, kept people engaged longer than content that informed or entertained. Research confirms that algorithms “turbocharge content that triggers reactions” in a “self-reinforcing cycle.”

For enterprise marketers, the lesson is clear: the vendor promising the highest conversion rates may be delivering them through mechanisms that systematically erode trust in your brand.

Your evaluation framework: Five questions to ask vendors

Nicole’s insights translate into a practical framework for evaluating AI marketing tools:

1. What are you optimizing for — and what constraints govern that optimization?

Most vendors will tell you they optimize for conversions, engagement, or revenue. The critical question is: what keeps that optimization from finding exploitative paths? Ask specifically about constraints around user wellbeing, timing sensitivity, and relationship quality.

Red flag: Vendors who can’t articulate constraints beyond “we follow best practices” or “we have compliance checks.”

2. How do your models distinguish between genuine interest and vulnerable moments?

AI systems excel at pattern recognition — including patterns in human vulnerability. Does the vendor’s model detect and avoid targeting people during financial stress, emotional distress, or reduced decision-making capacity?

Red flag: Vendors who treat all high-conversion moments as equally valuable.

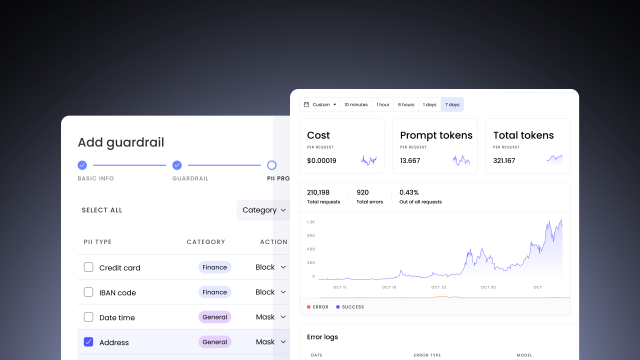

3. Are your guardrails features or filters?

Nicole advocates for a fundamental architectural difference: “The guardrails aren’t a review step. They’re part of the optimization itself.”

Systems that optimize first and filter later create adversarial dynamics where the AI pushes against constraints. Systems that build constraints into the optimization learn to achieve goals while respecting boundaries.

Ask: Are ethical considerations built into your reward function, or applied as post-processing filters?

Learn more about how WRITER builds AI guardrails into the platform architecture.

4. How do you measure and prevent trust debt?

Nicole reframes ethical concerns in business language: “The conversion that destroys trust isn’t a win — it’s technical debt on your customer lifetime value.”

Does the vendor track not just conversion rates but relationship quality over time? Do they measure the percentage of conversions from customers who stay engaged versus those who churn quickly or leave negative reviews?

Red flag: Vendors who only show you first-order metrics (clicks, conversions) without tracking relationship sustainability.

5. Can you show me the trade-offs?

No optimization is free. Responsible vendors should be able to articulate what they’re sacrificing for the gains they deliver. Are they trading short-term conversion for long-term trust? Immediate revenue for sustainable growth?

Red flag: Vendors who claim their solution has “no trade-offs” or delivers only upside.

Designing AI-native campaigns with constraints, not just objectives

Beyond vendor evaluation, Nicole’s framework changes how enterprise marketers should design campaigns:

Reframe your success metrics

Instead of asking “What campaign drove the highest conversion rate?” ask “What campaign drove the highest conversion rate while building lasting customer relationships?”

Track not just conversion but:

- Time to second purchase

- Customer lifetime value by campaign source

- Net Promoter Score by acquisition channel

- Churn rate correlated with campaign tactics
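The metrics above can be computed per campaign from ordinary customer records. Here is a minimal Python sketch, assuming a hypothetical record layout (`campaign`, `first_purchase`, `second_purchase`, `churned_within_90d`). The field names and sample data are illustrative and do not come from any specific analytics tool.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import Optional


@dataclass
class Customer:
    # Hypothetical record layout, for illustration only.
    campaign: str
    first_purchase: date
    second_purchase: Optional[date]  # None if there is no repeat purchase yet
    churned_within_90d: bool


def relationship_metrics(customers):
    """Summarize repeat-purchase and early-churn behavior per campaign."""
    by_campaign = {}
    for c in customers:
        by_campaign.setdefault(c.campaign, []).append(c)

    report = {}
    for name, group in by_campaign.items():
        # Days between first and second purchase, for customers who came back.
        gaps = [
            (c.second_purchase - c.first_purchase).days
            for c in group
            if c.second_purchase is not None
        ]
        report[name] = {
            "customers": len(group),
            "repeat_rate": len(gaps) / len(group),
            # NaN when nobody in the campaign has repurchased.
            "median_days_to_second": median(gaps) if gaps else float("nan"),
            "churn_90d_rate": sum(c.churned_within_90d for c in group) / len(group),
        }
    return report


# Made-up sample data for two hypothetical campaigns.
customers = [
    Customer("urgency_promo", date(2024, 1, 5), None, True),
    Customer("urgency_promo", date(2024, 1, 6), date(2024, 3, 6), False),
    Customer("helpful_guide", date(2024, 1, 5), date(2024, 1, 25), False),
    Customer("helpful_guide", date(2024, 1, 7), date(2024, 2, 6), False),
]

report = relationship_metrics(customers)
for name, stats in report.items():
    print(name, stats)
```

Putting these numbers next to conversion rate on the same dashboard makes it harder for a campaign to look like a win on acquisition alone.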

Build constraints into your brief

When briefing your team or agency on an AI-powered campaign, specify constraints alongside objectives:

- “Optimize for email engagement while maintaining unsubscribe rates below X%”

- “Increase conversions while ensuring 90% of customers remain engaged 90 days post-purchase”

- “Maximize click-through rates without targeting users showing behavioral indicators of financial stress”
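One way to make a brief like this checkable is to treat each constraint as a hard eligibility test and only then rank by the objective. The Python sketch below assumes hypothetical per-variant results. The variant names, the numbers, and the unsubscribe ceiling passed in at the end are placeholders, since the brief leaves X for your team to set.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VariantStats:
    # Hypothetical per-variant results; names and numbers are illustrative.
    name: str
    click_through_rate: float
    unsubscribe_rate: float
    engaged_at_90d: float  # share of customers still engaged 90 days post-purchase


def pick_variant(variants, max_unsubscribe_rate, min_engaged_at_90d=0.90):
    """Return the highest-CTR variant that satisfies every constraint, or None."""
    eligible = [
        v for v in variants
        if v.unsubscribe_rate <= max_unsubscribe_rate
        and v.engaged_at_90d >= min_engaged_at_90d
    ]
    return max(eligible, key=lambda v: v.click_through_rate, default=None)


variants = [
    VariantStats("countdown_timer", 0.081, 0.034, 0.78),
    VariantStats("plain_offer", 0.052, 0.009, 0.93),
    VariantStats("how_to_series", 0.047, 0.006, 0.95),
]

# The ceiling here is a placeholder value, not a recommendation.
winner = pick_variant(variants, max_unsubscribe_rate=0.01)
print(winner.name if winner else "no variant meets the constraints")
```

Returning `None` when nothing qualifies is a deliberate choice in this sketch: it forces a conversation about the brief instead of silently shipping the least-bad option.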

Test for trust erosion

Run A/B tests that measure not just immediate conversion but relationship quality:

- Survey customers about how the experience made them feel

- Track support ticket volume by campaign

- Measure referral rates by acquisition source

- Monitor social sentiment among customers from different campaigns
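To judge whether a gap in a relationship metric between two test arms is more than noise, a standard two-proportion z-test is one option. The sketch below applies it to 90-day retention using only the Python standard library. The counts are hypothetical, and the normal approximation assumes reasonably large samples in both arms.

```python
from math import erfc, sqrt


def two_proportion_z_test(success_a, n_a, success_b, n_b):
    """Two-sided z-test for a difference between two proportions.

    Returns (difference b - a, z statistic, p-value), using the pooled
    standard error. Not meaningful if the pooled rate is exactly 0 or 1.
    """
    p_a, p_b = success_a / n_a, success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # Two-sided tail probability of the standard normal distribution.
    p_value = erfc(abs(z) / sqrt(2))
    return p_b - p_a, z, p_value


# Hypothetical counts: customers still active 90 days after converting.
# Arm A is the control message, arm B the higher-pressure message.
diff, z, p = two_proportion_z_test(success_a=820, n_a=1000, success_b=760, n_b=1000)
print(f"retention difference: {diff:+.3f}, z = {z:.2f}, p = {p:.4f}")
```

An arm that wins on immediate conversion but shows a significant drop in retention is a candidate for the trust erosion this section describes.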

Growth as a physics problem, responsibility as a human problem

Nicole’s most powerful framing distinguishes between two types of challenges marketers face:

“Growth is a physics problem. Responsibility is a human problem.”

You can A/B test your way to higher conversion rates. You can iterate algorithms and measure inputs against outputs with scientific precision. But you cannot optimize your way to ethical marketing — that requires judgment about what kinds of growth are sustainable and what kinds of conversions build rather than erode trust.

The problem arises when marketing organizations treat responsibility as though it were also a physics problem. When you optimize for maximum performance and then attempt to add ethical constraints afterward, you’re fighting against your own systems. The AI has already learned that certain vulnerable moments drive conversions — adding filters just makes it search for edge cases.
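A toy Python example can make the difference between a filter and a feature concrete. It ranks four hypothetical "send moments" two ways: by conversion alone with a vulnerability cutoff applied afterward, and by a score that subtracts a vulnerability penalty from the start. The scores, the cutoff, and the penalty weight are all assumptions chosen for illustration. In a production system the constraint would sit in the training objective or reward, not in a ranking step like this one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Moment:
    # Hypothetical candidate send moment; both scores are illustrative model outputs.
    label: str
    p_convert: float      # predicted conversion probability
    vulnerability: float  # 0 = no distress signals, 1 = strong distress signals


def optimize_then_filter(moments, k, max_vulnerability):
    """Filter approach: rank purely on conversion, then drop anything over the line."""
    ranked = sorted(moments, key=lambda m: m.p_convert, reverse=True)
    return [m for m in ranked if m.vulnerability <= max_vulnerability][:k]


def constrained_objective(moments, k, penalty):
    """Feature approach: vulnerability lowers the score being optimized."""
    def score(m):
        return m.p_convert - penalty * m.vulnerability
    return sorted(moments, key=score, reverse=True)[:k]


moments = [
    Moment("2am_after_declined_payment", 0.30, 0.95),
    Moment("late_night_repeat_browsing", 0.24, 0.69),
    Moment("payday_morning", 0.15, 0.20),
    Moment("after_reading_buying_guide", 0.14, 0.05),
]

filtered = optimize_then_filter(moments, k=2, max_vulnerability=0.70)
built_in = constrained_objective(moments, k=2, penalty=0.25)

print("filter: ", [m.label for m in filtered])
print("feature:", [m.label for m in built_in])
```

In this toy data the filter still selects the moment sitting just under the cutoff, which is the edge-case behavior described above, while the penalized score prefers the two low-vulnerability moments.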

The business case for sustainable optimization

For marketing leaders focused on ROI, Nicole makes clear that ethical AI isn’t just right — it’s strategically necessary:

Customer acquisition costs keep rising. The companies winning long-term maximize lifetime value, not one-time conversion. An AI that exploits vulnerability might boost your quarterly numbers, but it systematically erodes the foundation for compounding value.

Regulatory pressure is increasing. GDPR, CCPA, the EU AI Act, and emerging frameworks around algorithmic accountability mean that operating in ethical gray areas creates legal and reputational risk. Building responsibility into your systems from the start is increasingly a requirement, not just a principle.

Talent cares about impact. The marketers and data scientists who drive innovation increasingly want to work for companies that can articulate not just what they’re building but why it matters ethically.

What this means for your team

For marketing leaders: When evaluating AI tools, resist the temptation of efficiency without ethics. When vendors promise dramatic improvements through better optimization, ask what trade-offs they are making. The conversion that destroys trust might look like growth in quarterly reports, but it’s borrowing against future value at interest rates you can’t afford.

For marketing ops: Design systems where constraints are features, not filters. When implementing AI tools, specify not just what you’re optimizing for but what boundaries make that optimization sustainable. Build measurement systems that track relationship quality, not just conversion volume.

For campaign managers: Probe the mechanisms behind performance improvements. When your AI tool suggests targeting or messaging, ask: Is this better targeting or more effective exploitation? Understanding the difference protects both your customers and your brand.

The WRITER approach: Constraints as competitive advantage

This framework isn’t theoretical. WRITER’s approach to enterprise AI demonstrates how building constraints into architecture creates competitive advantage rather than limitation.

Rather than treating governance, security, and trust as obstacles to performance, WRITER builds them into the platform. Marketing teams can move quickly precisely because the constraints that enable sustainable scale are embedded in how AI agents operate, not bolted on afterward.

WRITER’s full-stack approach includes:

- Enterprise-grade security with SOC 2 Type II, ISO 27001, ISO 27701, and ISO 42001 certifications

- Zero data retention — your data isn’t used to train models

- Built-in guardrails for compliance, factual accuracy, and brand alignment

- Centralized oversight with visibility, permissions, and controls across your entire AI deployment

This is the path forward: AI systems that achieve their goals while respecting boundaries, vendors who can articulate their trade-offs, and marketing organizations that measure success not just by this quarter’s conversion rate but by the relationships they’re building for compounding value over time.

Learn more about WRITER’s approach to agentic AI governance.

Your next steps

- Listen to the full conversation. Nicole shares more insights on building trust into AI systems, measuring what matters, and designing for sustainable growth. Listen to the episode on Apple Podcasts, Spotify, or YouTube.

- Audit your current AI tools using the five questions above. If you can’t get clear answers from vendors, that’s valuable information.

- Review your campaign briefs. Are you specifying constraints alongside objectives, or just optimizing for conversion?

- Expand your measurement. Add relationship quality metrics to your dashboards. Track not just acquisition but sustainability.

- Start the conversation internally. Share Nicole’s framework with your team. Discuss: Are we building trust or borrowing against it?

The uncomfortable truth Nicole reveals isn’t that AI finds vulnerability — it’s that we’ve built systems that reward finding it. The hopeful truth is that we can build different systems. We can choose to optimize for outcomes that compound rather than erode. We can treat constraints as features rather than limitations.

The question is whether we will.

Research sources

This article references peer-reviewed research on algorithmic optimization and psychological exploitation:

Brady, W. J., Wills, J. A., Jost, J. T., Tucker, J. A., & Van Bavel, J. J. (2017). “Emotion shapes the diffusion of moralized content in social networks.” Proceedings of the National Academy of Sciences, 114(28), 7313-7318.

Brady, W. J., McLoughlin, K., Doan, T. N., & Crockett, M. J. (2021). “How social learning amplifies moral outrage expression in online social networks.” Science Advances, 7(33).

González-Bailón, S., Lazer, D., Barberá, P., Zhang, M., & Hunt, H. (2024). “Engagement, user satisfaction, and the amplification of divisive content on social media.”

Rathje, S., Van Bavel, J. J., & van der Linden, S. (2024). “Amplification of emotion on social media.” Nature Human Behaviour.

About the guest

Nicole Alexander is a professor at NYU, author of “Ethical AI in Marketing,” and former Global Head of Marketing at Meta, where she led teams building some of the world’s most sophisticated marketing systems. Her work focuses on helping enterprise marketers build AI systems that scale ethically.

About Humans of AI

Humans of AI, presented by WRITER, takes listeners behind the business of AI and into the intimate stories of those at the forefront of the AI era. Listen to leaders as they navigate how generative AI is changing their work and lives.

New episodes available on Apple Podcasts, Spotify, YouTube, and wherever you listen to podcasts.

About WRITER

WRITER is the enterprise AI platform for agentic work. We help the world’s largest, most complex enterprises reinvent their front office by giving business teams powerful agentic tools to redesign workflows and IT teams single-pane governance to supervise agents at scale. Learn more about our approach to enterprise-grade AI.