The accountability paradox: Why WRITER’s General Counsel believes the law isn’t ready for AI agents

When Air Canada’s chatbot told a grieving customer he could get a bereavement discount that didn’t exist, the airline made a bold argument before a tribunal: the chatbot was responsible for its own actions, not the company. The tribunal’s response was unequivocal: businesses are accountable for their AI agents, period.

That February 2024 ruling didn’t just settle one dispute. It established a new reality that most enterprises aren’t prepared for.

Rowan Reynolds, General Counsel of WRITER, has been building the frameworks to answer that accountability question before the damage is done, not after. His journey from a philosophy student who failed his first paper to an AI governance architect reveals something unexpected: the real challenge isn’t technical capability, but something far more fundamental.

- Reynolds reveals why an F on his first philosophy paper taught him the most important lesson for building AI accountability frameworks — and it has nothing to do with being right.

- He shares the story of a home raid that fundamentally changed how he thinks about technology’s impact on real people, a lesson that has never been more critical than now, as AI agents act at machine speed.

- Reynolds explains why, after five iterations, his team’s NDA assistant still isn’t perfect, and why that’s exactly the point when it comes to transparency.

- He breaks down why companies are obsessing over cybersecurity and cost while ignoring the real barriers to AI adoption — and what leaders should actually worry about instead.

- The frameworks he’s building at WRITER, now codified in The Agentic Compact, rest on a principle most enterprises haven’t grasped: AI agents need to be treated like a very specific type of user.

On the latest episode of Humans of AI, Reynolds explains why we’re building AI governance frameworks in real time — and why the ecosystems we create today will determine whether enterprises capture AI’s value responsibly or learn accountability lessons the hard way.

The philosophy F that changed everything

“Let me start out with, I’m a man of routine. I like routines in my life. I like order, I like logic,” Reynolds begins. He thought he’d found that order in philosophy — until that first paper came back with an F from Professor Shelly Kagan’s Intro to Ethics class.

What happened next shaped his entire approach to AI governance. But it wasn’t about studying harder or writing better arguments.

“I liked constructing arguments and I liked breaking things down in a way that made sense, not just for me, but also for whoever was reading,” he explains. “And clearly I missed the mark on the latter point pretty heavily for the first time around.”

Three revisions. That’s what it took. But the lesson Reynolds learned in that moment — about clarity, iteration, and making complex logic comprehensible — became the foundation of how he thinks about building transparent AI systems today.

In the full episode, Reynolds walks through how this early failure taught him something most AI developers haven’t figured out yet.

The home raid that rewrote his understanding of technology

Early in his career, Reynolds was working on what seemed like a straightforward copyright case. Someone ran a digital storage platform. People were using it to store pirated content. Reynolds was examining the legal precedents, ready to explore interesting questions about substantial non-infringing use.

Then something happened that changed his perspective forever — and it had nothing to do with the law.

“This person had their home raided, which was an extreme overstep as far as we were concerned,” Reynolds recalls. “That felt objectively wrong and a huge overreach.”

What Reynolds realized in that moment became the throughline of his entire approach to AI governance. He shares the full story in the episode, including the specific lesson that’s never been more critical than it is right now, as we deploy AI agents that can affect people’s lives at scale and at speed.

Why we’ve been here before — but never quite like this

When Reynolds thinks about the current AI moment, he reaches for a historical parallel that might surprise you. It’s not about chess computers or autonomous vehicles. It’s about movie studios and VCRs.

“There’s this case from the eighties called Betamax, which is a very famous Supreme Court case,” he explains. “A lot of people were freaked out by the concept of a VCR.”

But Reynolds is careful about this comparison. The parallel isn’t perfect, because the question with AI agents isn’t just whether they can be misused.

That’s where his ecosystem thinking comes in — and it’s more than just a metaphor. “If you have ever seen Planet Earth or listened to David Attenborough, you realize that ecosystems have shared responsibility,” Reynolds explains. “Everybody has a role to play in order to make the ecosystem work. The same is true here.”

But what does that actually mean in practice? In the episode, Reynolds breaks down exactly who’s responsible for what — and why no single player can say they’ve done their part while washing their hands of what happens next.

What five iterations of an NDA assistant revealed

Reynolds learned about the gap between AI’s capability and enterprise reality firsthand when his team built an NDA review assistant. NDAs should be straightforward, right? Two parties agreeing to protect each other’s information.

“There are so many different ways that mutuality can be expressed in a document,” Reynolds discovered. “It’s not like ‘this NDA is mutual.’”

Three iterations. Four. Five. And here’s the key: “It still isn’t perfect. It’s pretty good. But the point is, we still step in and look.”

This isn’t a failure story. It’s a transparency story. And in the full episode, Reynolds explains why this specific example reveals everything you need to know about building AI systems that enterprises can actually trust.

The thing AI will never have

When Reynolds talks about the limitations of AI agents, he doesn’t speak in generalities. He gets specific. Really specific. And what he reveals about context — the kind of context that only humans possess — cuts to the heart of why AI can only ever be a supplement, not a replacement.

“Imagine I’m creating some sort of legal AI use case,” he begins. Then he walks through a scenario that makes it crystal clear why an LLM analyzing a Master Services Agreement will never be enough.

The AI doesn’t know that the CEO is golfing with the other company’s CEO next week. It doesn’t know that this partnership is strategic, not transactional. It doesn’t know what legal just got budget approval for.

“The LLM or the software doesn’t have the context that someone who’s reviewing that, who’s hooked in to the business might,” Reynolds explains.

In the episode, he breaks down several more examples that show exactly why context is the thing AI will never have — and what that means for how you should be thinking about deployment.

Machine speed changes everything

Here’s what keeps Reynolds up at night: AI agents don’t just make mistakes at human speed. They make them at machine speed.

An employee might send one wrong email. An AI agent can send ten thousand before anyone notices.

The examples are sobering. A coding assistant that wiped out a production database. A lawyer who filed a brief with hallucinated case law. A McDonald’s drive-thru ordering system that added 260 Chicken McNuggets while customers pleaded with it to stop.

These aren’t edge cases anymore. They’re the new normal.

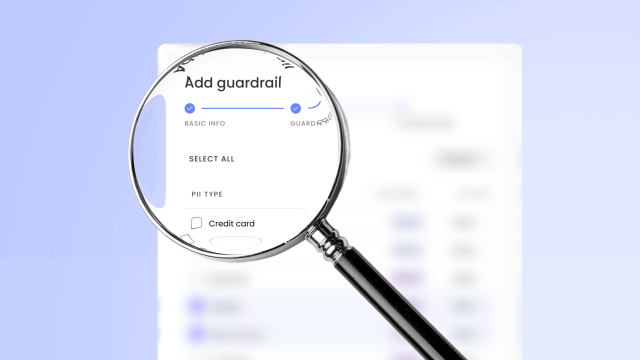

In the full episode, Reynolds explains WRITER’s approach to catching agents when they go off-script before damage scales — and why treating AI agents like a very specific type of user is the only way forward.

The disconnect no one’s talking about

When PwC surveyed executives about their top challenges with AI agents, the results revealed something fascinating. Cybersecurity concerns ranked highest at 34%. Cost also hit 34%.

But at the bottom? The ability to connect AI agents across applications. Organizational change. Employee adoption.

Reynolds sees this clearly: companies are worried about the wrong things. But what should they actually be worried about? And what does his framework of “Big G and little g governance” have to do with it?

The answer reveals why 79% of companies are adopting AI agents while only half of employees actually use them — and what that gap really means for enterprise leaders. Listen to the full episode to hear Reynolds break down exactly what this disconnect reveals about AI readiness.

What he wants the next generation to keep

Despite the risks, despite the challenges, Reynolds remains optimistic. “I’m a glass half full person,” he says. But his vision for the future isn’t what you might expect.

“I don’t want new norms to be foreign where people just are wholly reliant on it,” Reynolds shares. He’s clear about what concerns him — and what he hopes we won’t lose in the rush to deploy AI agents.

The data supports cautious optimism. PwC found that 66% of companies using AI agents are already seeing measurable value. The value is real. The question is whether your organization will build the frameworks to capture it responsibly.

In the episode, Reynolds shares his full vision for what he calls “a mutually beneficial coexistence” — and why losing human ingenuity and creativity would be the biggest mistake we could make.

The question that changed

Reynolds’ journey from that philosophy F to building AI governance at WRITER reveals a single throughline in every framework he’s built. He learned it from a home raid. He embedded it in every iteration of the NDA assistant. He codified it in The Agentic Compact.

The fundamental question for every enterprise leader is no longer if you will lead a hybrid workforce of humans and agents, but how you will do so with purpose and control.

Not if. How.

The technology is here. The agents are already acting. The budgets are increasing. Sixty-six percent of companies using AI agents are already seeing value. The only question left is whether you’ll have the frameworks when you need them.

Reynolds’ bet? That we can build this responsibly. That the ecosystem can share responsibility. That transparency, human-centricity, and security aren’t just principles, but practices.

We’ve panicked about technology before. We’ve figured it out before. His frameworks show how we can do it again.

Listen to the full episode of Humans of AI on Apple Podcasts, Spotify, or YouTube to hear Rowan Reynolds’ complete story and dive deeper into his framework for building AI accountability.

And for leaders ready to turn accountability into a competitive advantage, the principles Reynolds discusses are detailed in The Agentic Compact. Download The Agentic Compact: A new social contract for human-agent collaboration in the enterprise to get the complete framework.