Evaluating agentic AI solutions for the enterprise

The CIO’s complete guide

You’re at an inflection point — and the window is closing

Your board expects AI transformation. Your business units are already building shadow agents. Your security team is raising red flags. And you’re being asked to make a platform decision that will define your company’s competitive position for the next decade.

The pressure is real: 88% of senior executives plan to increase AI-related budgets in the next 12 months due to agentic AI (PwC AI Agent Survey, May 2025). But here’s what keeps you up at night — the enterprise agentic AI market is still immature. Vendors are rebranding workflow automation as “agentic.” Point solutions promise quick wins but create long-term technical debt. And DIY approaches hide crushing costs that only emerge at scale.

The stakes are higher than your first generative AI pilots. This isn’t about productivity tools anymore. This is about re-architecting core business processes with autonomous agents that make decisions, take actions, and generate measurable business value across three critical dimensions:

- Accelerated revenue: AI agents that autonomously run complex commercial workflows—from market research to personalized client outreach—that directly boost your top line

- Intelligent cost reduction: Agents taking full ownership of entire processes, fundamentally re-architecting your cost structures across operations, IT, and the business

- Systemic risk mitigation: A governed agentic framework that embeds compliance, brand consistency, and operational resilience directly into your core processes

But here’s what most enterprises get wrong: They’re forced to compromise. You can have developer tools that are powerful but siloed from the business users who hold the context. Or business tools that are accessible but shallow. You can streamline your stack in one ecosystem but get locked into one vendor’s roadmap. Or manage the complexity of stitching everything together yourself.

WRITER changes that calculus. We’re the only platform purpose-built for enterprises that delivers all three pillars with no tradeoffs:

- Business Empowerment: The people closest to the work design and maintain agents—encoding institutional knowledge that makes AI actually work

- IT Governance: Complete control over the environment every agent runs in—with full interoperability across your existing tech stack

- Industry Expertise: Proven domain-specific solutions and embedded specialists that accelerate time-to-value from months to weeks

The fundamental question has shifted. You’re no longer asking “What can this tool do for my organization?” You’re asking: “How do we re-engineer, and actually govern, our business processes with AI automation at enterprise scale?”

This guide gives you a framework to cut through the noise and make a decision you can defend to your board, your CISO, and your CFO.

What you’ll learn:

- The three pain points derailing enterprise agentic AI initiatives—and why a patchwork approach fails

- How the AI technology stack is evolving—from models to orchestrated multi-agent systems

- A strategic evaluation framework—with competitive contrast and questions that separate real platforms from point solutions

- What successful enterprise adoption actually looks like — including implementation timelines and ROI metrics

- How to move from evaluation to decision — with answers to the toughest objections you’ll face internally


Executive summary

This guide provides CIOs with a comprehensive framework for evaluating enterprise agentic AI platforms — the platform decision that will define your organization’s competitive position for the next decade.

What you’ll gain:

- A strategic lens for distinguishing true platforms from rebranded point solutions and workflow automation tools

- Five critical evaluation criteria — model strategy, security architecture, orchestration capabilities, business enablement, and total cost of ownership — with vendor questions that reveal architectural reality

- Real-world implementation roadmaps showing how enterprises achieve 333% ROI with a payback period of less than six months (Forrester TEI, April 2025)

- Answers to the six toughest internal objections: DIY vs. buy, Microsoft/Google alternatives, vendor lock-in, AI sprawl, shadow AI prevention, and regulatory uncertainty

- A decision framework that helps you move from analysis paralysis to confident action by balancing board concerns, CFO ROI requirements, and CISO security standards

The bottom line:

The enterprise agentic AI market is still maturing, but the window for strategic advantage is closing. Organizations that successfully navigate the “Crawl, Walk, Run, Fly” maturity curve will re-architect core operations and scale without proportional headcount growth. Those that delay six months for “more data” will find themselves catching up to competitors who moved decisively. This guide gives you the framework to choose wisely, execute strategically, and lead the transformation.

What are the top three agentic AI pain points for CIOs?

KEY TAKEAWAYS

- The true financial drain of a DIY agentic framework is the massive, hidden cost of the infrastructure required to make it run reliably at scale

- Decentralized AI agents inevitably create a “Wild West” environment, exposing your business to serious security vulnerabilities and operational chaos

- Allowing powerful AI agents to work without coordination builds a complex new form of technical debt that undermines their value

Pain point #1

Spiraling AI costs and hidden fees

It’s tempting to think that stitching together best-of-breed AI models and tools gives you ultimate control and cost efficiency. But the sticker price of an AI model is just the tip of the iceberg. The real expense lies in the massive, ongoing effort to build and support the infrastructure that allows agents to function reliably at an enterprise scale.

The integration tax

Consider what happens when you try to connect agents to your existing apps and data. Each integration becomes a constant, expensive engineering nightmare. Every new connection is a fragile point of failure. Each API update requires maintenance. Each security patch demands cross-system testing.

Most enterprises underestimate these integration costs by 3-5x in their initial business case.

The performance challenge

A slow chatbot is an annoyance. A slow agent fumbling a time-sensitive financial transaction is a disaster. Achieving the latency-optimized inference needed for agents to perform complex tasks instantly requires infrastructure that’s incredibly difficult and expensive to build from scratch.

Production-grade agent performance demands specialized infrastructure that point solutions don’t provide — and most IT teams don’t have the expertise to build.

The talent gap

The engineers who can build, deploy, and govern autonomous agents represent a new breed of specialist. They need to understand LLM ops, orchestration frameworks, enterprise integration patterns, and AI security. They are rare, in high demand, and expensive.

The “build it ourselves” path typically requires hiring 5-8 specialized FTEs at $200K+ each, plus ongoing retention costs in a hyper-competitive talent market.
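As a rough worked example of the figures above, the sketch below multiplies the quoted headcount range by the quoted $200K floor. The function name is illustrative, and the calculation deliberately ignores retention, benefits, and tooling, so treat the result as a lower bound on salary alone.

```python
def annual_salary_floor(ftes: int, salary_per_fte: int = 200_000) -> int:
    """Lower-bound annual salary cost for a team of specialist FTEs."""
    return ftes * salary_per_fte


# Headcount range quoted in the text: 5 to 8 specialized FTEs.
low = annual_salary_floor(5)
high = annual_salary_floor(8)

print(f"${low:,} to ${high:,} per year before retention costs")
```

Even at the floor salary, the team alone lands in the seven-figure range each year before any infrastructure spend.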


Pain point #2

Ungoverned AI and mounting security risks

Your biggest risk isn’t an employee pasting a confidential document into a public AI tool. It’s that same employee, with the best of intentions, using a no-code app to build a makeshift AI agent that starts moving customer data between systems autonomously—completely invisible to your IT and security teams.

This is the “shadow operations” problem: autonomous processes running outside your governance framework, creating compliance exposure you can’t even see, let alone manage.

Why retrofitted security fails

Most agentic AI platforms evolved from consumer products or developer tools. Security and compliance get bolted on after core architecture decisions are locked in. You end up with different security models for different components — the exact fragmentation you’re trying to prevent.

Every additional point solution in your AI stack multiplies your security surface area and creates gaps where data can leak or compliance can fail.

What true enterprise governance requires

True enterprise agentic AI governance isn’t about controlling the AI model itself—it’s about governing every single action an agent can take. Think of a genuine enterprise platform as the central nervous system for all your agents.

It must provide a unified security perimeter through single-tenant or private cloud deployment that completely isolates your agents and your data. It needs action-level guardrails—a strict permissioning layer that defines exactly which systems an agent can touch, what actions it can perform, and what data it can handle.

Complete auditability is non-negotiable. Every agent action must be traceable, compliant, and secure by design, with full audit logs that satisfy SOC 2, HIPAA, and GDPR requirements. And you need real-time observability and alerting—continuous monitoring of agent and user activity, with policy enforcement and alerts that catch issues before they become incidents.
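To make action-level guardrails and auditability concrete, here is a minimal sketch of a permission check that records every decision. It is not WRITER's API or any vendor's implementation. The class names, agent name, system names, and data classes are all hypothetical, and a real platform would persist the log and enforce the check inside its own runtime.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical permission set for one agent."""
    systems: frozenset[str]       # systems the agent may touch
    actions: frozenset[str]       # actions it may perform
    data_classes: frozenset[str]  # data categories it may handle


@dataclass
class Guardrail:
    policies: dict[str, AgentPolicy]
    audit_log: list[dict] = field(default_factory=list)

    def authorize(self, agent: str, system: str, action: str, data_class: str) -> bool:
        policy = self.policies.get(agent)
        # Unknown agents are denied by default.
        allowed = (
            policy is not None
            and system in policy.systems
            and action in policy.actions
            and data_class in policy.data_classes
        )
        # Record every decision, allowed or denied, so actions stay traceable.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "system": system,
            "action": action,
            "data_class": data_class,
            "allowed": allowed,
        })
        return allowed


guardrail = Guardrail(policies={
    "invoice-agent": AgentPolicy(
        systems=frozenset({"erp"}),
        actions=frozenset({"read", "create_draft"}),
        data_classes=frozenset({"financial"}),
    )
})

ok = guardrail.authorize("invoice-agent", "erp", "read", "financial")
denied = guardrail.authorize("invoice-agent", "crm", "export", "pii")
```

The point of the design is that the denied request still leaves an audit entry, which is what lets a security team see attempted actions as well as completed ones.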


Pain point #3

Agentic power without central control

The real magic of agentic AI happens when you move beyond a single agent and start conducting an orchestra of them. One agent monitors your supply chain, another analyzes sales data, a third drafts executive summaries—all working in concert to achieve complex business outcomes.

But this power brings a critical question: How do you keep that orchestra from descending into chaos?

The coordination crisis

When you stitch together different agentic solutions, every agent operates in its own silo. Each has its own security model, its own data protocols, its own way of handling errors and exceptions. You’re not building an intelligent enterprise — you’re building a digital house of cards that will collapse under its own complexity.

Agents start making contradictory decisions based on different data sources. You lose visibility into which agent is doing what and when. When something goes wrong, debugging becomes impossible. You can’t enforce consistent guardrails across agent behaviors, and each new agent integration exponentially increases complexity.

This is a fast track to a new, crippling kind of technical debt — one that undermines the very value you’re trying to create with AI automation.

What orchestration demands

The full potential of an automated enterprise only materializes when your fleet of agents is managed from a single command center.

You need a unified orchestration layer — a central system that coordinates agent workflows, manages dependencies, and ensures agents work together toward business goals. Agents must be able to build on each other’s work through shared context and memory, accessing common knowledge bases and maintaining consistency across interactions.

Centralized governance becomes essential: one place to define policies, set guardrails, monitor performance, and audit actions across all agents. And critically, you need the ability to improve agent capabilities without breaking existing workflows through coordinated updates and backward compatibility.


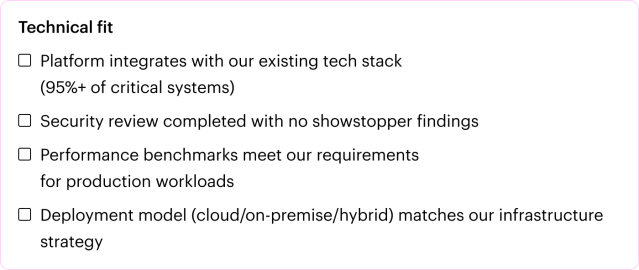

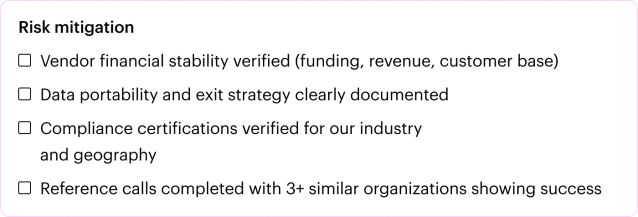

A modern framework for evaluating enterprise AI solutions

KEY TAKEAWAYS

- The “build vs. buy” decision has evolved — you’re no longer choosing an application, but selecting the platform that will be the foundation of your AI strategy

- A smart evaluation must look past model performance and dig into security, governance, scalability, user experience, and total cost of ownership

- The questions you ask vendors reveal whether they offer a real platform or rebranded point solutions

The evaluation paradox: Most enterprise software evaluations follow a familiar pattern—create requirements, demo solutions, check boxes, pick a winner. But agentic AI platforms don’t fit this model. You’re not buying software that does a specific thing. You’re choosing the architectural foundation for how your business will operate for the next decade.

The shift from tools to platform: In traditional enterprise software, you could choose best-of-breed tools for different functions and integrate them over time. With agentic AI, that approach fails. The integration complexity, security gaps, and orchestration challenges make a patchwork architecture unsustainable at scale.

This means your evaluation framework must go deeper than feature checklists. You need to understand architectural philosophy, security design, governance capabilities, and the hidden costs of different approaches.

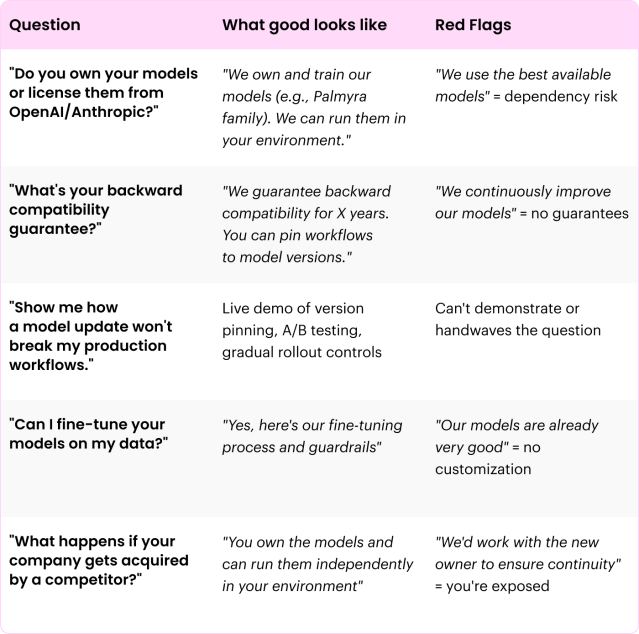

1. Model strategy and architecture

When evaluating AI platforms, most buyers start with “How good is the model?” But the more strategic question is: “How much control do I have over the model, and what happens as AI technology evolves?”

What to evaluate:

Model ownership and transparency

- Does the vendor own and train their models, or are they a wrapper on OpenAI/Anthropic/Google?

- Can you run the models in your own environment (private cloud, on-premise)?

- Do you have visibility into how models are trained, what data they use, and how they’re updated?

Why this matters: If your platform provider is dependent on a third-party LLM provider, you inherit that dependency. Your pricing, capabilities, and roadmap are at the mercy of someone else’s decisions.

Backward compatibility and version control

- What happens when the vendor releases a new model version?

- Will your existing agent workflows break or behave differently?

- Can you test new versions before deploying them to production?

- Can you pin specific workflows to specific model versions?

Why this matters: A major cause of failed enterprise AI initiatives is broken workflows after model updates. If your critical business processes rely on agents that can change behavior unpredictably, you don’t have a platform — you have a time bomb.
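One way to picture version pinning is a small registry that resolves each workflow to a model version. This is a sketch under stated assumptions, not a description of how any vendor implements it: the `ModelRegistry` class, the workflow names, and the version strings are hypothetical.

```python
class ModelRegistry:
    """Hypothetical registry mapping workflows to model versions."""

    def __init__(self, default_version: str) -> None:
        self.default_version = default_version
        self._pins: dict[str, str] = {}

    def pin(self, workflow: str, version: str) -> None:
        """Lock a workflow to one model version."""
        self._pins[workflow] = version

    def release(self, new_version: str) -> None:
        # A new release only moves the default; pinned workflows are untouched.
        self.default_version = new_version

    def resolve(self, workflow: str) -> str:
        """Return the pinned version if one exists, else the current default."""
        return self._pins.get(workflow, self.default_version)


registry = ModelRegistry(default_version="model-v1")
registry.pin("claims-triage", "model-v1")
registry.release("model-v2")

print(registry.resolve("claims-triage"))  # pinned workflow
print(registry.resolve("new-workflow"))   # unpinned workflow follows the default
```

When you ask a vendor the questions above, this is the behavior to look for: a release changes what new or unpinned workflows use, while pinned production workflows keep their tested version until you move them.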

Fine-tuning and customization

- Can you fine-tune models on your proprietary data?

- Does the platform support domain-specific model optimization?

- Can you train models to match your brand voice, compliance requirements, and business logic?

Why this matters: Generic models provide generic results. Enterprise differentiation comes from AI that understands your business, not just general knowledge.

Questions that separate platforms from point solutions

Platform evaluation best practices: Model ownership & independence

The challenge: Model dependency creates vendor lock-in at the worst possible level—the intelligence layer. If your critical workflows depend on a model you don’t control, you’re betting your business on someone else’s roadmap, pricing changes, and deprecation decisions. But you also need the flexibility to use specialized models for specific use cases.

What separates platforms from point solutions: Most “agentic platforms” are wrappers on foundation models from OpenAI, Anthropic, or Google. When those providers change pricing, deprecate models, or update capabilities, you’re stuck adapting with no backward compatibility guarantees. True enterprise platforms provide both owned models with guaranteed backward compatibility AND the flexibility to use third-party models (including custom-trained ones) through universal model controls—managing everything through a single, unified governance layer.

What best-in-class looks like: Leading platforms own their core models, ensuring they’re never distilled or quantized post-training for consistent, predictable performance. They guarantee strict backward compatibility — new model versions never break existing workflows. AND they integrate with model platforms like Amazon Bedrock, giving you the flexibility to choose the best model for each job while maintaining unified governance, routing decisions, and compliance enforcement across all models.

Example: WRITER provides fully owned Palmyra models (including the latest Palmyra X5) with guaranteed backward compatibility, plus integration with Amazon Bedrock for third-party model access. A manufacturing company built 47 specialized agents on WRITER’s platform over 18 months. When WRITER released Palmyra X5, every agent got smarter without changing a line of code — zero breakage. Meanwhile, they can leverage Amazon Bedrock models for specialized tasks, all governed through WRITER’s unified platform.

EVALUATION QUESTIONS:

- Do you own your models or license them from third parties — and what’s your backward compatibility guarantee?

- Can I use third-party or custom-trained models when needed while maintaining unified governance?

- Show me how model updates won’t break production workflows — can I pin workflows to specific versions?

2. Enterprise-grade security and governance

The non-negotiable requirement: For Global 2000 companies, security and governance aren’t features — they’re foundational requirements. Your AI platform must be a fortress, not a convenience.

What to evaluate:

Data privacy and isolation

- Single-tenant deployment options (not just multi-tenant with “logical separation”)

- Private cloud or on-premise deployment capabilities

- Data residency controls for global compliance (GDPR, etc.)

- Zero data retention policies for sensitive operations

Why this matters: In a multi-tenant environment, you’re trusting the vendor’s infrastructure. In regulated industries or with sensitive data, that’s often unacceptable to your legal and compliance teams.

Compliance certifications

- SOC 2 Type II

- HIPAA compliance (if relevant)

- GDPR readiness

- Industry-specific certifications (FedRAMP, ISO 27001, etc.)

Why this matters: Each missing certification means months of internal security reviews, legal negotiations, and risk committee approvals. Vendors with comprehensive certifications accelerate your path to production.

AI guardrails and policy enforcement

- Can you define which systems agents can access?

- Can you specify which actions agents are permitted to take?

- Can you enforce data handling policies (PII redaction, data retention, etc.)?

- Can you set content policies (brand voice, tone, prohibited topics)?

Why this matters: Without granular guardrails, agents become uncontrolled automation—exactly the “shadow AI” problem you’re trying to solve.

Supervision suite and governance at scale

The governance challenge for agentic AI isn’t securing the platform—it’s supervising autonomous agents operating at enterprise scale. As business teams build dozens or hundreds of agents, IT needs comprehensive visibility, control, and confidence to govern this new “synthetic workforce” without creating bottlenecks.

Key supervision capabilities:

- Centralized visibility: Event-level monitoring and analytics across all agents, users, and interactions—providing a single pane of glass into your entire deployment

- Agent approval workflows: Review and approve agents before they’re deployed to production, preventing shadow AI from proliferating across the organization

- Global policies that propagate automatically: Set guardrails once at the platform level (data handling, content policies, system access), and they automatically enforce across all agents—no need to configure each agent individually

- Granular role-based permissions: Define permissions that are enforced at runtime across agents, connectors, and knowledge sources, ensuring least-privilege access without manual configuration

- Real-time alerting and anomaly detection: Automated monitoring for policy violations, unusual behavior, or performance issues—with alerts that enable proactive response

- Cost management and rate limiting: Monitor AI spend across agents and users, set rate limits centrally, and leverage automatic query caching to reduce costs

- Integration with existing security tools: Monitor agents through your SIEM/observability platforms (Datadog, Splunk, Traceloop), and enforce policies through security platforms (Noma, Lakera, Amazon Bedrock Guardrails)

Why this matters: Without comprehensive supervision, agent adoption creates ungovernable chaos. Business teams build agents faster than IT can review them. Agents access systems without proper permissions. Costs spiral without visibility. Supervision at scale means IT governs the entire fleet through centralized controls that scale automatically—no manual bottleneck as adoption grows.
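The idea of global policies that propagate automatically can be sketched as a merge in which platform-level settings always override agent-level ones. The policy keys, the merge rule, and the simplified email pattern below are assumptions for illustration only. Production PII detection is considerably more involved than a single regular expression.

```python
import re

# Hypothetical platform-wide policy set once by IT.
GLOBAL_POLICY = {"mask_email": True, "max_requests_per_minute": 60}

# Deliberately simple email pattern, good enough for a demonstration.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def effective_policy(agent_overrides: dict) -> dict:
    """Merge agent settings with the global policy; global keys always win."""
    return {**agent_overrides, **GLOBAL_POLICY}


def apply_output_policy(text: str, policy: dict) -> str:
    """Mask email addresses when the effective policy requires it."""
    if policy.get("mask_email"):
        return EMAIL.sub("[REDACTED_EMAIL]", text)
    return text


# An agent builder tries to switch masking off; the global setting overrides it.
agent_settings = {"mask_email": False, "tone": "formal"}
policy = effective_policy(agent_settings)
masked = apply_output_policy("Contact jane.doe@example.com today", policy)

print(policy)
print(masked)
```

Because the global dictionary is applied last, an individual agent can add its own settings but cannot weaken a platform-level guardrail, which is the property that removes per-agent configuration from IT's workload.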

What good looks like:

- Centralized dashboard showing all agents, their usage, performance, and costs in real-time

- Agent approval workflows that prevent deployment of ungoverned agents while enabling fast iteration

- Global policies (data handling, security, compliance) that propagate to all agents automatically

- Event-level logs providing complete auditability for every agent action

- Integration with your existing security tools so governance fits your workflows, not vice versa

Red flags:

- Governance is manual (reviewing each agent individually, configuring policies per agent)

- No centralized visibility—monitoring requires checking multiple dashboards or logs

- Agent approval is informal or non-existent (shadow AI risk)

- Policies must be configured per agent rather than set globally

- No integration with your existing security/observability tools (creating another silo)

Questions that separate platforms from point solutions

Platform evaluation best practices: Enterprise security & governance architecture

The challenge: Most agentic AI platforms evolved from consumer products or developer tools. Security and compliance are retrofitted after core architecture decisions are made, creating vulnerabilities at integration points that CISO teams can’t accept. Additionally, you need governance that scales automatically as agent adoption grows—not manual processes that create IT bottlenecks.

What separates platforms from point solutions: Patchwork approaches force you to conduct separate security reviews for each component (model API, orchestration layer, data connectors, etc.). Different vendors have different security models, and integrations create data leakage gaps. True enterprise platforms are architected from day one for Global 2000 security requirements—every component operates within a unified security perimeter with event-level audit trails, global policies that propagate automatically, granular permissions enforced at runtime, and native integration with your existing security tools.

What best-in-class looks like: Leading platforms provide comprehensive supervision suites that give IT full visibility, control, and confidence to govern agents at scale. This includes centralized dashboards with event-level monitoring and analytics, agent approval workflows that prevent shadow AI, global guardrails that automatically block or mask sensitive data across all agents, role-based permissions with least-privilege access, and integration with your existing security platforms. One security audit covers the entire platform, which should be ready for SOC 2 Type II, HIPAA, GDPR, and PCI compliance.

Example: WRITER’s platform was architected for enterprise security and governance from day one, with a supervision suite providing centralized control. A financial services firm couldn’t start pilots with other vendors until security completed separate reviews of each component. With WRITER, they conducted one comprehensive security review covering the entire unified platform — saving four months. WRITER integrates natively with observability tools (Datadog, Traceloop) and security platforms (Noma, Lakera, Amazon Bedrock Guardrails), so governance scales through existing workflows rather than creating another siloed system.

EVALUATION QUESTIONS:

- How many separate security reviews will we need to conduct across your components?

- Show me your unified security architecture — how do global policies propagate automatically?

- Can you demonstrate integration with our existing security, observability, and guardrails platforms?

3. Agentic capabilities and orchestration

Anyone can build a single agent that does one thing well. The platform test is: Can it orchestrate multiple agents into complex, coordinated workflows that deliver business outcomes?

What to evaluate:

RAG (retrieval-augmented generation) capabilities

- How does the platform connect agents to your enterprise data?

- Can it handle multiple data sources (structured and unstructured)?

- Does it maintain data freshness and relevance?

- Can you control which data sources agents can access?

Why this matters: Agents without access to your proprietary data are just expensive chatbots. RAG is the bridge between generic AI and business-specific intelligence.
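The data-source control question above can be illustrated with a toy retrieval step that filters by permitted source before ranking. This sketch swaps in plain word overlap for the embedding-based search that production RAG systems typically use, and the document store, source names, and `retrieve` function are all hypothetical.

```python
# Hypothetical in-memory document store with a source label on each record.
DOCUMENTS = [
    {"source": "hr_wiki", "text": "Parental leave policy grants sixteen weeks"},
    {"source": "finance_db", "text": "Q3 revenue forecast and budget policy"},
    {"source": "public_site", "text": "Company leave policy overview for candidates"},
]


def retrieve(query: str, allowed_sources: set[str], top_k: int = 2) -> list[dict]:
    """Rank permitted documents by simple word overlap with the query."""
    terms = set(query.lower().split())
    scored = []
    for doc in DOCUMENTS:
        if doc["source"] not in allowed_sources:
            continue  # access control is applied before ranking
        overlap = len(terms & set(doc["text"].lower().split()))
        if overlap:
            scored.append((overlap, doc))
    # Highest overlap first; ties keep their original order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


# This agent is not permitted to read the finance database.
hits = retrieve("leave policy", allowed_sources={"hr_wiki", "public_site"})
for doc in hits:
    print(doc["source"], "->", doc["text"])
```

Filtering before ranking matters: a document the agent is not allowed to see never enters the candidate set, so it cannot leak into a generated answer.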

Multi-agent orchestration

- Can agents hand off tasks to each other?

- Can you define complex workflows with decision points and branching logic?

- How are agent dependencies managed?

- What happens when one agent in a chain fails?

Why this matters: The value of agentic AI compounds when agents work together. If your platform can only support isolated agents, you’ll never achieve enterprise-scale automation.
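The handoff and failure questions above can be made concrete with a minimal sketch of a sequential agent chain. Each "agent" here is just a plain function standing in for a model-backed step, and the step names, the shared context dictionary, and the stop-on-first-failure rule are illustrative assumptions rather than any platform's actual behavior.

```python
from typing import Callable

# An agent takes the shared context and returns an updated copy.
Agent = Callable[[dict], dict]


def research(ctx: dict) -> dict:
    return {**ctx, "findings": f"notes on {ctx['topic']}"}


def draft(ctx: dict) -> dict:
    # Depends on the research step having run first.
    if "findings" not in ctx:
        raise ValueError("draft needs findings from the research step")
    return {**ctx, "draft": f"Summary: {ctx['findings']}"}


def flaky_review(ctx: dict) -> dict:
    # Simulates a downstream agent that is unavailable.
    raise RuntimeError("review service unavailable")


def run_chain(steps: list[tuple[str, Agent]], ctx: dict) -> dict:
    """Run agents in order; stop at the first failure and report where it happened."""
    completed: list[str] = []
    for name, agent in steps:
        try:
            ctx = agent(ctx)
        except Exception as exc:
            return {"status": "failed", "failed_step": name, "error": str(exc),
                    "completed": completed, "context": ctx}
        completed.append(name)
    return {"status": "ok", "completed": completed, "context": ctx}


result = run_chain(
    [("research", research), ("draft", draft), ("review", flaky_review)],
    {"topic": "Q3 churn"},
)
print(result["status"], result["failed_step"], result["completed"])
```

The failed run still returns the context produced by the steps that succeeded, so the work can be resumed or handed to a human instead of being lost. That is the kind of answer to look for when you ask a vendor what happens when one agent in a chain fails.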

Agent lifecycle management

Building agents is only the beginning. True enterprise platforms provide comprehensive lifecycle management — from creation through testing, deployment, monitoring, and continuous improvement.

Key lifecycle capabilities:

- Build: How are agents created and configured? Can you leverage templates, or must you build from scratch?

- Test: Are there sandbox environments where agents can be tested safely before production deployment? Can you validate behavior with test data?

- Deploy: What deployment patterns are supported? Gradual rollout, A/B testing, canary deployments, or all-at-once only?

- Monitor: How do you track agent performance, usage patterns, and business outcomes in production?

- Iterate: Can you continuously improve agents based on usage data, user feedback, and changing business needs? Is version control supported?

Why this matters: Agents aren’t “set it and forget it”—they require ongoing management and optimization. Platforms with strong lifecycle management make it easy to build better agents over time. Those without create technical debt as agents drift from optimal performance.
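For the deployment patterns mentioned above, a gradual or canary rollout can be sketched as deterministic bucketing: each user is hashed into a stable bucket, and only buckets below the rollout percentage see the new agent version. The function names and the "stable" and "candidate" labels are hypothetical, and this is one common approach rather than any specific platform's mechanism.

```python
import hashlib


def canary_bucket(user_id: str) -> int:
    """Map a user to a stable bucket in [0, 100) using a hash of the ID."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def choose_version(user_id: str, canary_percent: int) -> str:
    """Send users in the lowest buckets to the candidate agent version."""
    return "candidate" if canary_bucket(user_id) < canary_percent else "stable"


# Start with a small slice of traffic, then widen it as monitoring stays healthy.
users = [f"user-{i}" for i in range(1000)]
at_10_percent = sum(choose_version(u, 10) == "candidate" for u in users)
print(f"{at_10_percent} of {len(users)} users routed to the candidate version")

# Rolling back means setting the percentage to zero: everyone returns to stable.
assert all(choose_version(u, 0) == "stable" for u in users)
```

Hashing the user ID keeps each person on the same version across requests, which makes before-and-after comparisons meaningful and makes rollback a configuration change instead of a redeployment.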

What good looks like:

- Visual workflow designers show agent logic at each lifecycle stage

- Sandbox environments allow safe testing with production-like data

- Deployment includes rollback capabilities if issues emerge

- Real-time monitoring shows which agents are performing vs. underperforming

- Version control allows iterating on agent logic without breaking production

Red flags:

- No testing environment—agents go straight to production

- Deployment is all-or-nothing with no gradual rollout options

- Minimal visibility into agent performance post-deployment

- Difficult to update agents once deployed

- No version history or rollback capabilities

Platform evaluation best practices: Build, activate & supervise at scale

The challenge: The real value of agentic AI comes from orchestrating multiple specialized agents into complex workflows. But the very autonomy that makes agents powerful also makes them a liability if left ungoverned. When business teams can build at scale, how does IT maintain visibility, control, and confidence? How do you democratize AI development without creating shadow AI chaos?

What separates platforms from point solutions: Point solutions excel at single-agent use cases but fail when you need to build, deploy, and supervise agents at enterprise scale. Each vendor has its own approach, creating silos and governance gaps. True enterprise platforms provide an end-to-end environment for the entire agent lifecycle — from collaborative building to deployment to comprehensive supervision — all managed from unified dashboards with centralized governance that scales automatically.

What best-in-class looks like: Leading platforms enable both business empowerment AND IT governance without tradeoffs. Business users can design agents using 100+ prebuilt templates and no-code tools, while developers extend them with custom logic. IT maintains complete oversight through centralized monitoring, event-level analytics, agent approval workflows, global policies that propagate automatically, and granular role-based permissions. Supervision scales with adoption—no manual IT effort required.

Example: WRITER provides an end-to-end platform for building, activating, and supervising AI agents at enterprise scale. With 100+ prebuilt agents and collaborative Agent Builder, teams start fast. WRITER’s newly launched Supervision Suite gives IT full visibility with event-level monitoring, agent approval workflows, and global guardrails that enforce compliance automatically. Customers like Qualcomm achieve 85% weekly agent usage with 2,400 hours saved monthly, while Prudential reaches 70% adoption—both with IT maintaining complete governance throughout.

EVALUATION QUESTIONS:

- How do you enable both business building and IT governance without forcing tradeoffs?

- Can you demonstrate centralized supervision across all agents with event-level visibility?

- How many agents can we deploy before governance becomes a manual bottleneck?

4. Platform experience & enablement

The platform paradox: Enterprise AI platforms promise to democratize AI development—empowering business users to build agents without deep technical skills. But most fail to deliver on this promise. The technical teams get powerful tools, but business users are locked out. Or business tools are accessible but so shallow that developers can’t extend them.

The strategic question isn’t just “Can this platform build agents?” It’s “Who can build agents, and what support do they need to succeed?”

True enterprise platforms eliminate the forced choice between business empowerment and technical sophistication. They provide intuitive tools for business users while giving developers the extensibility they need—all in one unified environment. And they back these capabilities with comprehensive enablement that turns platform adoption into organizational transformation.

What to evaluate:

Business user experience

The litmus test for enterprise agentic AI is simple: Can the people closest to the work — marketers, sales operations, customer success managers, HR specialists — design and maintain agents that solve their problems? Or are they dependent on IT to build everything?

Key capabilities to assess:

- No-code/low-code agent building: Visual workflow designers, template-based creation, natural language configuration—can business users create functioning agents without writing code?

- Pre-built agent library: Does the platform provide 100+ ready-to-deploy agents for common enterprise use cases, or do you start from scratch every time?

- Agent customization: Can business users modify pre-built agents to match their specific workflows, or do customizations require developer intervention?

- Collaborative development: Can business users design agent logic while developers extend it with custom integrations and advanced features — working in the same platform?

- Contextual guidance: Does the platform provide in-app help, workflow suggestions, and guardrails that guide business users to best practices?

Why this matters: The bottleneck in enterprise AI isn’t technology—it’s the backlog of use cases waiting for IT resources. If only developers can build agents, you’ll never scale beyond a handful of high-value projects. Business user empowerment is the difference between 10 agents and 1,000 agents.

What good looks like:

- Business users can deploy pre-built agents in minutes without IT involvement

- They can customize workflow logic, knowledge sources, and output formats using visual tools

- Developers can step in to add complex integrations, custom APIs, or advanced orchestration when needed

- Both groups work in the same platform with role-appropriate interfaces—no context switching

- The platform prevents risky configurations through built-in guardrails while allowing creative solutions

Red flags:

- “Our platform is low-code” but requires understanding APIs, JSON, or programming concepts

- Pre-built templates exist but can’t be customized without developer help

- Business users and developers use separate tools that don’t integrate cleanly

- The platform assumes technical sophistication (e.g., requires understanding of prompt engineering, RAG architecture, vector databases)

Developer experience and extensibility

While business users need simplicity, developers need power. A true enterprise platform provides both—without forcing tradeoffs.

Key capabilities to assess:

- Robust SDK and APIs: Is there a comprehensive software development kit for building custom agents programmatically? Are APIs well-documented, versioned, and stable?

- Extensibility framework: Can developers extend platform capabilities with custom functions, integrations, and workflows? Or are they constrained to what the vendor provides?

- Integration development: How easy is it to build custom connectors to proprietary systems or niche enterprise applications?

- Testing and debugging: Are there sandbox environments, debugging tools, and testing frameworks purpose-built for agentic workflows?

- Version control and CI/CD: Can agent configurations be managed in version control systems? Do agents support standard DevOps practices?

- Performance optimization: Can developers access performance metrics, optimize agent configurations, and fine-tune for specific use cases?

Why this matters: Your internal developers are the force multipliers who extend the platform’s capabilities to your unique business needs. A platform without a strong developer experience becomes a bottleneck—developers can only work within preset boundaries, and custom use cases stall.

What good looks like:

- Comprehensive SDK with examples, documentation, and active community support

- Developers can build, test, and deploy custom agents using familiar tools (IDEs, Git, CI/CD pipelines)

- Clear extension points for adding custom logic without modifying the core platform

- Performance tuning capabilities and detailed logging for optimization

- Developer-friendly debugging tools that make it easy to trace agent behavior

Red flags:

- SDK is poorly documented or lacks critical capabilities

- Custom integrations require reverse-engineering or workarounds

- No sandbox environment—developers must test in production

- Platform treats developers as an afterthought (business-user tools only)

- Frequent breaking changes to APIs without deprecation warnings
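To make the version-control and CI/CD criterion concrete, here is a minimal sketch of the kind of check a pipeline could run when agent definitions live in Git as JSON files. The field names (`name`, `owner`, `version`, `allowed_tools`) and the sample agent are hypothetical illustrations, not any vendor's actual schema; a real platform defines its own format.

```python
import json

# Hypothetical required fields; a real platform defines its own schema.
REQUIRED_FIELDS = {"name", "owner", "version", "allowed_tools"}


def validate_agent_config(raw_json):
    """Return a list of problems found in one agent definition (empty if valid)."""
    config = json.loads(raw_json)
    missing = sorted(REQUIRED_FIELDS - set(config))
    problems = [f"missing field: {field}" for field in missing]
    # Simple guardrail: an agent with no declared tools is treated as misconfigured.
    if not config.get("allowed_tools"):
        problems.append("agent must declare at least one allowed tool")
    return problems


# Hypothetical agent definition as it might be stored in a repository.
example = (
    '{"name": "rfp-drafter", "owner": "sales-ops", '
    '"version": "1.2.0", "allowed_tools": []}'
)
print(validate_agent_config(example))
```

If a vendor cannot show an equivalent of this review-and-validate step for agent changes, treat the "supports DevOps practices" claim with caution.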

Pre-built solutions and accelerators

The fastest path to value isn’t building from scratch — it’s starting with proven solutions and customizing for your context.

Key capabilities to assess:

- Industry-specific agents: Does the platform provide domain-specific solutions (e.g., healthcare, financial services, legal) that understand industry workflows and compliance requirements?

- Common workflow playbooks: Are there pre-configured multi-agent workflows for standard enterprise processes (onboarding, procurement, campaign management, etc.)?

- Reference implementations: Can you access real customer implementations and adapt them to your needs?

- Time-to-value benchmarks: What’s the typical timeline from contract signature to first agent in production for your use case?

Why this matters: Generic platforms force you to reinvent the wheel for every use case. Platforms with deep industry expertise and proven playbooks compress time-to-value from months to weeks—and reduce implementation risk through battle-tested solutions.

What good looks like:

- 100+ pre-built agents covering common enterprise functions

- Industry-specific agent libraries (e.g., 20+ healthcare agents, 15+ financial services agents)

- Reference architectures for complex workflows (e.g., “Here’s how Company X automated their RFP process”)

- Clear metrics on how quickly customers achieve production deployment for similar use cases

Red flags:

- Small library of generic agents with minimal business logic

- No industry-specific solutions — everything is horizontal/generic

- “We can build that custom for you” (means you’re paying for what should be included)

- No proven reference implementations or customer examples

Enablement and support

Platform capabilities don’t matter if your team doesn’t know how to use them. The vendor’s enablement approach determines whether adoption succeeds or stalls.

Key capabilities to assess:

- Training programs: What onboarding and ongoing training is provided? Are there role-specific learning paths (business users vs. developers vs. administrators)?

- Certification: Can team members earn recognized certifications that demonstrate competency?

- Documentation quality: Is documentation comprehensive, current, and accessible? Are there video tutorials, interactive guides, and searchable knowledge bases?

- Professional services: What implementation support is available? Do they offer strategic consulting, not just technical setup?

- Customer success programs: Is there proactive guidance from dedicated customer success managers, or reactive support tickets only?

- Community and peer learning: Is there an active user community, customer events, and opportunities to learn from peers?

- Change management support: Does the vendor help with organizational change—executive alignment, stakeholder management, adoption planning?

Why this matters: Platform adoption is organizational transformation, not software installation. Vendors who treat it as such—providing strategic partnership, not just technical support—are the ones whose customers succeed at scale.

What good looks like:

- Structured onboarding program with clear learning paths and milestones

- Ongoing training as platform capabilities expand and your use cases evolve

- Dedicated customer success team that proactively identifies optimization opportunities

- Professional services that go beyond implementation—helping design your AI operating model

- Active customer community with regular events, peer sharing, and collaboration

Red flags:

- “Here’s the documentation” (no structured training or onboarding)

- Support tickets only—no proactive customer success engagement

- Professional services are purely technical setup without strategic guidance

- No change management or adoption planning support

- Vendor views relationship as transactional (software sale) rather than partnership

Questions that separate platforms from point solutions

Platform evaluation best practices: Enabling business-led AI transformation

The challenge: Most enterprises get trapped choosing between powerful-but-inaccessible developer tools or simple-but-shallow business tools. They’re forced to compromise: either business teams wait in IT backlogs, or they build agents in isolated tools that IT can’t govern. Meanwhile, lack of strategic enablement means platform capabilities sit unused because teams don’t know how to harness them effectively.

What separates platforms from point solutions: Point solutions optimize for one audience—either developers get sophisticated APIs but business users are locked out, or business users get templates but developers can’t extend them. Patchwork approaches try to combine separate tools but create friction through constant context-switching. True enterprise platforms provide unified environments where business users build with no-code tools, developers extend with full SDK access, and both collaborate seamlessly—backed by comprehensive enablement that drives organizational transformation.

What best-in-class looks like: Leading platforms eliminate the forced choice between accessibility and power. Business users leverage 100+ pre-built agents and visual workflow designers to solve problems autonomously. Developers extend these solutions using robust SDKs, build custom integrations, and establish reusable patterns. Both work in the same platform with role-appropriate interfaces. The vendor acts as strategic partner—providing structured training, proactive customer success, implementation services that co-create your AI operating model, and community learning that accelerates adoption across the organization.

Example: WRITER provides an end-to-end platform where business empowerment and developer sophistication coexist. Business users access 100+ pre-built agents and Agent Builder (collaborative no-code environment) to design workflows matching how work actually gets done. Developers extend these agents using WRITER’s SDK, build custom integrations, and orchestrate complex multi-agent systems—all within the unified platform. Salesforce trained 50 non-technical business users to build and maintain their own agents through WRITER’s enablement programs. Meanwhile, WRITER’s professional services team partners with enterprises to design comprehensive AI operating models—not just implement technology, but transform how organizations work—accelerating adoption from pilot to enterprise-wide impact.

EVALUATION QUESTIONS:

- Can you demonstrate a business user building and deploying an agent independently, then a developer extending it — in the same platform?

- What’s your pre-built agent library for our industry, and how quickly can we customize these solutions?

- Walk me through your enablement approach — how do you transform platform capabilities into organizational change?

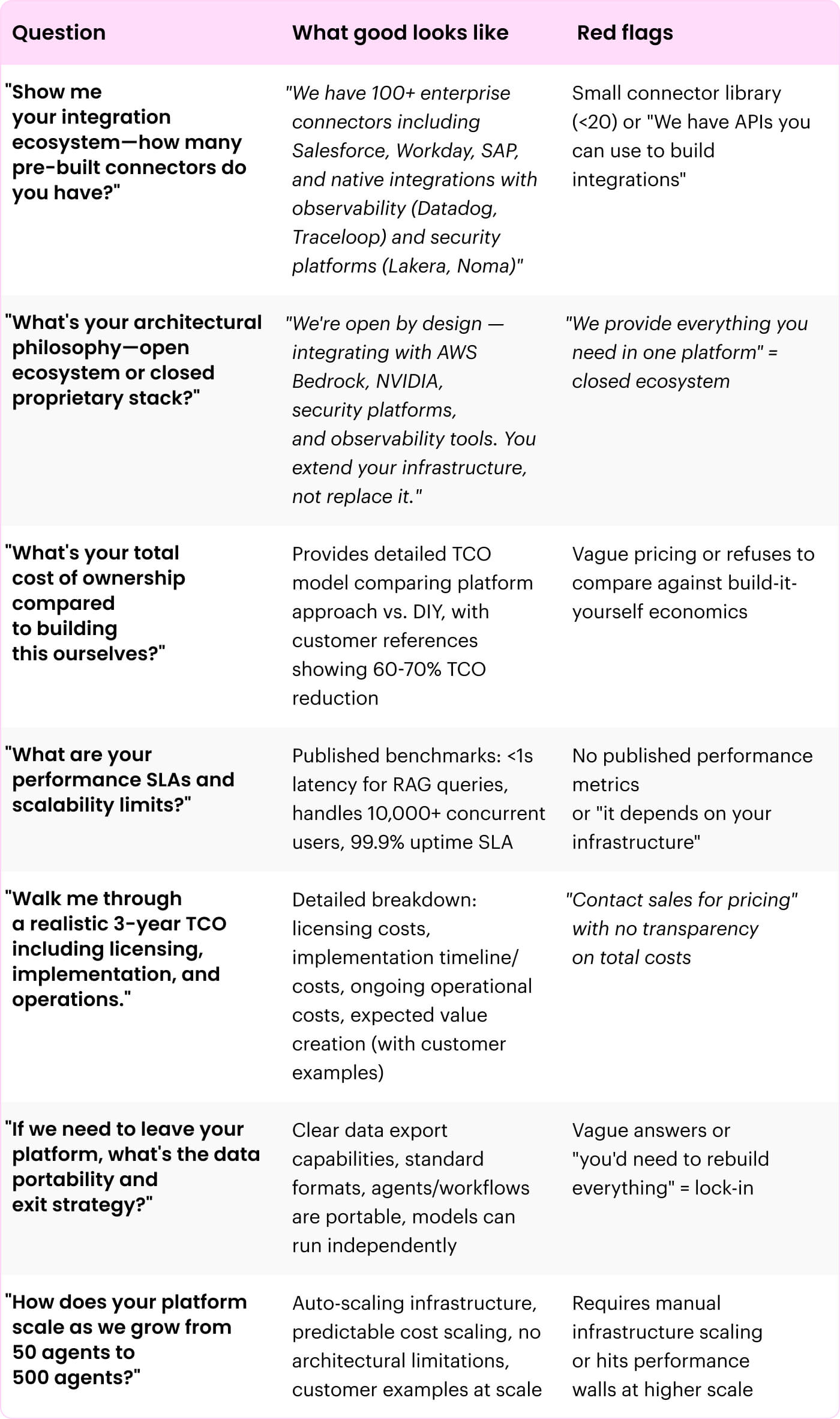

5. Infrastructure, integration & total cost of ownership

The hidden costs and lock-in risks: Most enterprise AI evaluations focus on capabilities — what can the platform do? But the strategic questions are architectural: How does it integrate with your existing infrastructure? What does it actually cost to deploy and operate at scale? And can you evolve your stack as AI technology changes, or are you locked in?

These questions matter because agentic AI isn’t a standalone application — it’s the nervous system for your entire business. It must connect to every critical system, scale with adoption, and deliver economic value that justifies the investment. And it must do this while preserving your strategic flexibility as the AI landscape evolves.

The difference between platforms that succeed at enterprise scale and those that stall comes down to three factors: architectural philosophy (open vs. closed), operational excellence (performance, scalability, reliability), and total economic impact (visible costs + hidden costs + business value created).

What to evaluate:

Platform architecture philosophy

Before evaluating technical specifications, understand the vendor’s architectural philosophy. This determines whether you’re building on a foundation that can evolve with your needs—or one that constrains your future.

Key capabilities to assess:

- Open by design vs. closed ecosystem: Does the platform integrate natively with your existing tools (observability, security, model platforms), or does it force you into a closed ecosystem where the vendor controls your entire stack?

- Vendor lock-in risks: If this vendor gets acquired, changes strategy, or discontinues the platform — what’s your exit strategy? Can you export your agents, workflows, and knowledge graphs? Can you run models independently?

- Deployment flexibility: Can you deploy how you need to — public cloud, private cloud, on-premise, hybrid, multi-cloud? Or are you constrained to the vendor’s preferred infrastructure?

- Extend vs. replace philosophy: Does this platform extend your existing infrastructure investments (data platforms, security tools, observability systems), or does it require replacing them with proprietary alternatives?

Why this matters: The AI landscape is evolving rapidly. Platforms with open architectures allow you to integrate best-of-breed tools as they emerge. Closed ecosystems lock you into one vendor’s roadmap and innovation pace—creating strategic risk when technology shifts unpredictably.

What good looks like:

- Platform is “open by design”—natively integrating with market-leading observability, security, model, and data platforms

- Clear data portability — you can export agent configurations, knowledge graphs, and workflows in standard formats

- Multi-cloud and hybrid deployment options preserve infrastructure flexibility

- Vendor articulates a philosophy of extending (not replacing) your existing investments

- Exit strategy exists: you’re not held hostage if the relationship ends

Red flags:

- Vendor pushes proprietary alternatives to tools you already use

- “We’re the only platform you’ll need” messaging, which usually signals a closed ecosystem

- No clear data export or portability story

- Limited deployment options (e.g., only vendor’s cloud)

- Vague answers about what happens if you need to leave the platform

Integration ecosystem

Agentic AI platforms that can’t connect to your existing systems aren’t platforms — they’re expensive science experiments. True enterprise platforms provide comprehensive integration capabilities that make agents operational across your entire tech stack.

Key integration capabilities:

- Enterprise application connectors: Native integrations with core enterprise systems — Salesforce, Workday, SAP, ServiceNow, Microsoft 365, Google Workspace, Slack, etc. How many connectors are pre-built vs. requiring custom development?

- Observability platform integrations: Can you monitor agents through your existing observability tools (Datadog, Splunk, Traceloop, Dynatrace, New Relic)? Or do you need to use a separate monitoring system?

- Security platform integrations: Does the platform integrate with your security tools (Noma, Lakera, Amazon Bedrock Guardrails, Lasso) to enforce consistent policies across your AI ecosystem?

- Model platform integrations: Can you leverage model platforms like Amazon Bedrock, NVIDIA, and Azure OpenAI while maintaining unified governance? Or are you locked into the vendor’s models only?

- API quality and documentation: Are APIs well-designed, comprehensively documented, and versioned properly? Can your developers integrate efficiently?

- Webhook and event-driven architecture: Does the platform support real-time triggers and event-driven workflows? Can agents react to events from other systems?

- Custom connector framework: When a pre-built integration doesn’t exist, how easy is it to build custom connectors? Is there SDK support and documentation?

Why this matters: Your agentic AI platform will touch every critical system in your enterprise. The integration burden determines whether deployment takes weeks or quarters—and whether governance remains consistent across your tech stack or fragments into silos.

What good looks like:

- 100+ pre-built connectors covering your core enterprise applications

- Native integrations (not just API wrappers) with observability, security, and model platforms

- Comprehensive API documentation with code samples and SDKs

- Active ecosystem of integration partners and community-built connectors

- Clear roadmap for expanding connector library based on customer needs

Red flags:

- Small connector library requiring extensive custom development

- Integrations are shallow (basic API calls) rather than deep (semantic understanding of connected systems)

- Poor API documentation or frequent breaking changes

- “We have an API you can use to build that yourself” (means you’re doing the integration work)

- No integration with your existing observability or security tools

Performance and scalability

Platform capabilities mean nothing if performance degrades as adoption scales. Production-ready enterprise platforms deliver predictable performance under load and scale gracefully as your agentic AI footprint grows.

Key capabilities to assess:

- Latency benchmarks: What are typical response times for agent queries? Simple RAG queries vs. complex multi-agent workflows? How does latency scale with concurrent users?

- Throughput capacity: How many agent interactions can the platform handle simultaneously? What’s the peak load before performance degrades?

- Concurrent user limits: How many users can interact with agents at the same time? Is there a practical limit before you need to scale infrastructure?

- Multi-agent workflow performance: When orchestrating complex workflows with 5+ agents working together, does performance remain acceptable?

- Infrastructure scaling model: As you add agents and users, what infrastructure investments are required? Does scaling happen automatically or require manual intervention?

- Performance SLAs: Does the vendor provide uptime guarantees and performance SLAs? What happens if they miss these commitments?

- Geographic distribution: Can agents be deployed across multiple regions for low-latency global access? Or is performance degraded for users far from primary data centers?

Why this matters: A slow chatbot is an annoyance. A slow agent fumbling a time-sensitive business process is a disaster. Enterprise adoption demands predictable, production-grade performance that scales as usage grows.

What good looks like:

- Latency <1 second for typical RAG queries, <5 seconds for complex multi-agent workflows

- Platform handles 10,000+ concurrent users without performance degradation

- Auto-scaling infrastructure that adapts to demand without manual intervention

- Published performance benchmarks and SLAs with clear commitments

- Geographic distribution options for global enterprises

Red flags:

- Vague answers about performance (“it depends on your use case”)

- No published benchmarks or SLAs

- Performance issues mentioned by existing customers during reference calls

- Scaling requires significant infrastructure investment or manual configuration

- Single data center deployment creates latency issues for global users
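One way to test a vendor's performance claims during a pilot is to compare measured latencies against the targets listed above (under 1 second for typical RAG queries, under 5 seconds for complex multi-agent workflows). The sketch below assumes you judge each workload by its 95th-percentile latency, which is a common convention but not something the targets specify; the sample measurements are hypothetical.

```python
import statistics

# Targets from the checklist above, in seconds.
# Judging by the 95th percentile is an assumption, not part of the targets.
TARGETS_SECONDS = {"rag_query": 1.0, "multi_agent_workflow": 5.0}


def p95(samples):
    """Return the 95th-percentile latency of a list of samples (seconds)."""
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    return statistics.quantiles(samples, n=20, method="inclusive")[-1]


def meets_targets(measurements):
    """Map each workload to True if its p95 latency is under the target."""
    return {
        workload: p95(samples) < TARGETS_SECONDS[workload]
        for workload, samples in measurements.items()
    }


# Hypothetical pilot measurements, in seconds.
pilot = {
    "rag_query": [0.4, 0.5, 0.6, 0.5, 0.7, 0.6, 0.5, 0.8, 0.6, 0.5],
    "multi_agent_workflow": [3.1, 3.8, 4.2, 6.5, 3.9, 4.0, 7.2, 3.7, 4.1, 3.6],
}
print(meets_targets(pilot))
```

In this made-up data the multi-agent workflow has a comfortable average but two slow runs, so it fails on the tail. Averages can hide exactly the slow cases that disrupt a time-sensitive process, which is why asking vendors for percentile figures is worthwhile.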

Total cost of ownership (TCO)

The sticker price of an AI platform is just the beginning. The true cost includes licensing, implementation, ongoing operations, hidden fees, and the opportunity cost of alternatives. Smart CIOs evaluate total economic impact — costs AND value created.

Comprehensive TCO analysis:

1. Licensing and subscription costs

- Pricing model: Per-user, per-agent, consumption-based, or enterprise flat-fee? How does pricing scale as adoption grows?

- Feature tiers: Are critical capabilities (Supervision Suite, advanced orchestration, model flexibility) only available at premium tiers?

- Overage charges: What happens when you exceed licensed capacity? Are overages punitive or reasonable?

- Long-term commitments: Are you locked into multi-year contracts, or can you adjust based on actual adoption and value?

Typical range: For enterprise deployments (250-500 users, 20-50 agents), annual licensing typically ranges from $500K-$2M depending on platform sophistication and included capabilities.

2. Implementation costs

- Professional services requirements: What implementation support is necessary? Can you deploy independently, or do you need vendor professional services?

- Internal resource allocation: How much internal team time (AI leads, project managers, IT, business SMEs) is required for deployment and configuration?

- Integration development: What’s the effort to integrate with your existing systems? Pre-built connectors reduce this, but custom integrations can be expensive.

- Data preparation and graph RAG setup: How much effort to connect enterprise data sources and configure graph RAG for your business?

- Change management and training: What resources are needed for organizational change management and user training?

Typical range: Implementation costs for mid-sized enterprise deployments typically range from $200K-$1M (professional services + internal resources), with a timeline of 3-6 months from contract to first agents in production.

Key insight from the Forrester TEI study: Organizations using platforms with pre-built infrastructure and professional services deploy in 8 weeks vs. 18+ months for DIY approaches, and avoid hiring 6+ specialized FTEs for ongoing platform management.

3. Ongoing operational costs

- Maintenance and support: What are annual maintenance fees and support costs beyond initial licensing?

- Infrastructure hosting: If you’re responsible for hosting, what are cloud infrastructure costs? If vendor-hosted, are there capacity or usage charges?

- Model usage fees: Are there consumption charges for model API calls, or is usage included in licensing?

- Data storage and retention: As knowledge graphs grow and agent interactions are logged, what storage costs accumulate?

- Internal team for ongoing management: How many FTEs are required for platform administration, monitoring, and optimization?

Typical range: Ongoing operational costs (beyond licensing) typically add 20-30% to annual TCO, including support fees, incremental infrastructure, and 1-2 FTEs for platform management.

4. Hidden costs and fees

- Ongoing integration maintenance: Each integration requires updates when APIs change. What’s the ongoing effort to maintain connectors?

- Model fine-tuning and optimization: If you need to fine-tune models or optimize performance, what are the costs?

- Scaling infrastructure: As you add agents and users, what incremental infrastructure investments are required?

- Vendor lock-in switching costs: If you need to change platforms later, what’s the effort to migrate agents, data, and workflows?

Key insight: Hidden integration costs are typically 3-5x higher than initial estimates when using point solutions or DIY approaches. Platforms with comprehensive connector libraries dramatically reduce this burden.

5. Total Economic Impact (Costs + Value)

The most sophisticated TCO analysis includes the value created — not just costs incurred.

- Value created (from Forrester TEI study of enterprise WRITER deployments):

  - Labor efficiencies: 200% productivity improvement for marketing teams = $10M over 3 years

  - Agency cost avoidance: 50% reduction in agency scope = $5M over 3 years

  - Compliance and brand standards: 85% faster review time = $100K over 3 years

  - Faster onboarding: 65% reduction in onboarding time = $56K over 3 years

  - Legacy environment savings: Consolidating AI tools = $492K over 3 years

- Total benefits: $15.63M over 3 years (present value)

- Total costs: $3.61M over 3 years (present value)

- Net present value: $12.02M

- ROI: 333%

- Payback period: <6 months

Why this matters: A platform that costs $2M annually but delivers $5M in value has a negative “cost” — it’s a profit center. Cheap platforms that deliver minimal value are actually more expensive. Always calculate total economic impact, not just purchase price.
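The headline figures quoted above can be reproduced with simple arithmetic, which is a useful sanity check to apply to any vendor-supplied business case. This sketch uses only the present-value totals cited from the Forrester TEI study; the function names are illustrative.

```python
# Present-value totals quoted above from the Forrester TEI study (USD millions).
total_benefits = 15.63
total_costs = 3.61


def net_present_value(benefits, costs):
    """Net present value: benefits minus costs, both already discounted."""
    return benefits - costs


def roi_percent(benefits, costs):
    """Return on investment: net benefit as a percentage of cost."""
    return (benefits - costs) / costs * 100


npv = net_present_value(total_benefits, total_costs)
roi = roi_percent(total_benefits, total_costs)
print(f"NPV: ${npv:.2f}M")
print(f"ROI: {roi:.0f}%")
```

Running the same two calculations on your own cost and benefit estimates shows how sensitive the ROI is to each assumption before you take a business case to the board.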

6. Build vs. buy economics

The alternative to buying a platform is building your own. Here’s the realistic comparison:

- DIY approach (building your own agentic AI infrastructure):

  - Upfront build: 12-18 months with 5-8 specialized engineers ($200K+ each) = $1M-$1.6M annually

  - Ongoing maintenance: 3-5 FTEs permanently = $600K-$1M annually

  - Integration development: Every connector is custom-built = $50K-$200K per integration

  - Hidden costs: Talent retention in hyper-competitive market, technology obsolescence risk

  - Opportunity cost: Engineering team focused on infrastructure instead of business differentiation

  - Total DIY TCO over 3 years: $5M-$8M for infrastructure alone, not including business logic and agents

- Platform approach:

  - Licensing: $500K-$2M annually

  - Implementation: $200K-$1M one-time

  - Ongoing operations: 1-2 FTEs = $200K-$400K annually

  - Total Platform TCO over 3 years: $2.5M-$5M (roughly 40-50% lower than DIY when comparing like ends of each range)

Key advantage: Platform approach delivers production-ready capabilities in weeks vs. quarters, with enterprise-grade security, governance, and performance built in.
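To apply the platform cost structure above to your own situation, a small calculator along these lines may help. It is a minimal sketch: the scenario values are hypothetical mid-range picks from the ranges listed above ($1M annual licensing, $500K implementation, 1.5 FTEs at $200K each for operations), not figures from any specific deployment.

```python
def three_year_tco(annual_licensing, implementation, annual_operations, years=3):
    """Sum recurring licensing and operations over the period plus one-time implementation."""
    return annual_licensing * years + implementation + annual_operations * years


# Hypothetical mid-range scenario drawn from the ranges listed above.
scenario = three_year_tco(
    annual_licensing=1_000_000,
    implementation=500_000,
    annual_operations=300_000,  # 1.5 FTEs at $200K each (assumed)
)
print(f"${scenario:,}")
```

Replacing the three inputs with quoted prices from each shortlisted vendor gives a like-for-like comparison that is harder to skew than headline ranges.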

Questions that separate platforms from point solutions

Platform evaluation best practices: Infrastructure & interoperability

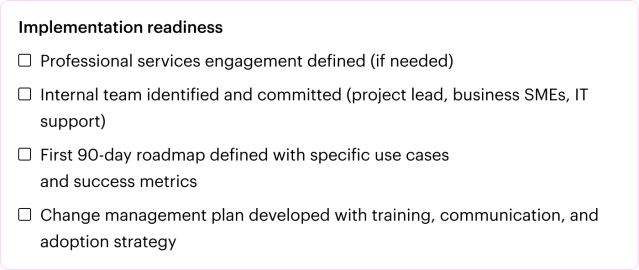

What successful enterprise adoption actually looks like

KEY TAKEAWAYS

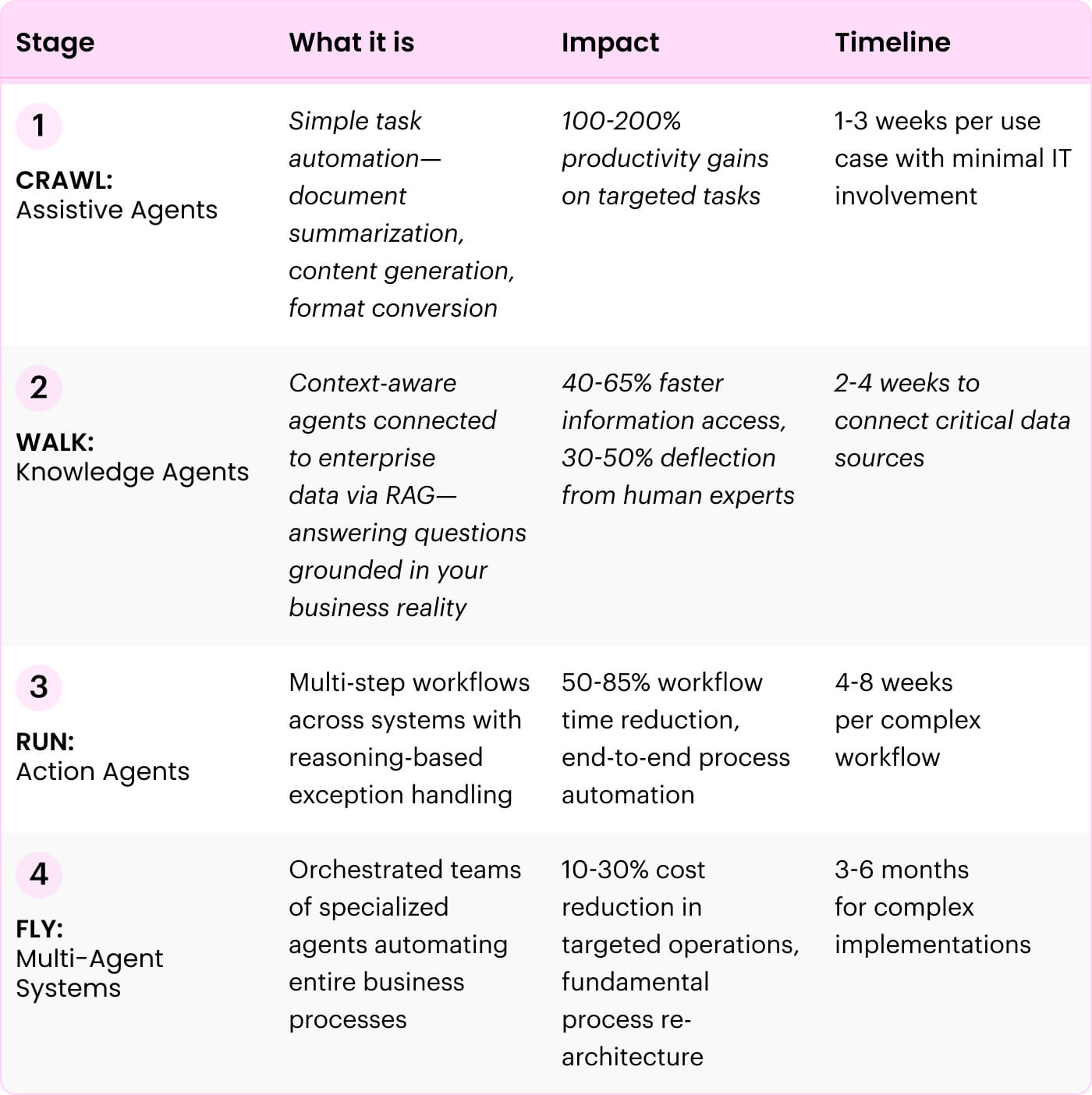

- Enterprise agentic AI adoption follows a predictable “Crawl, Walk, Run, Fly” maturity curve—successful deployments build momentum through wins at each stage rather than attempting complex automation on day one

- Independent Forrester research validates 333% ROI over three years with less than 6-month payback—but only for organizations that treat this as organizational transformation, not just technology implementation

- The 95% of AI initiatives that fail share common patterns: starting too complex, treating it as an IT project, insufficient governance, neglecting change management, and measuring the wrong metrics

The evaluation paradox part two: You’ve assessed platforms across five critical dimensions. You’ve asked the tough questions. You’ve seen the demos. Now comes the harder question every CIO faces: What does successful deployment actually look like at enterprise scale?

The data reveals a critical gap. According to PwC’s May 2025 AI Agent Survey of 300 senior executives, 79% of companies are already adopting AI agents and 66% report measurable productivity gains. Investment is surging — 88% plan to increase AI budgets in the next 12 months.

But here’s what separates early adopters from true transformation: Most organizations are using agents for routine task automation, not operational re-architecture. Only 45% are fundamentally rethinking operating models, and just 42% are redesigning core processes around AI agents. The result? Broad adoption but shallow impact.

The strategic question isn’t “Are we using AI agents?” It’s “Are we using them to re-architect how work gets done?”

The organizations achieving transformational results share common patterns in how they approach maturity, measure success, and scale from pilots to production.

This section provides a realistic roadmap based on actual enterprise deployments, including timelines, resource requirements, and the metrics that separate transformation from theater.

The agentic maturity framework: Your journey from pilots to production

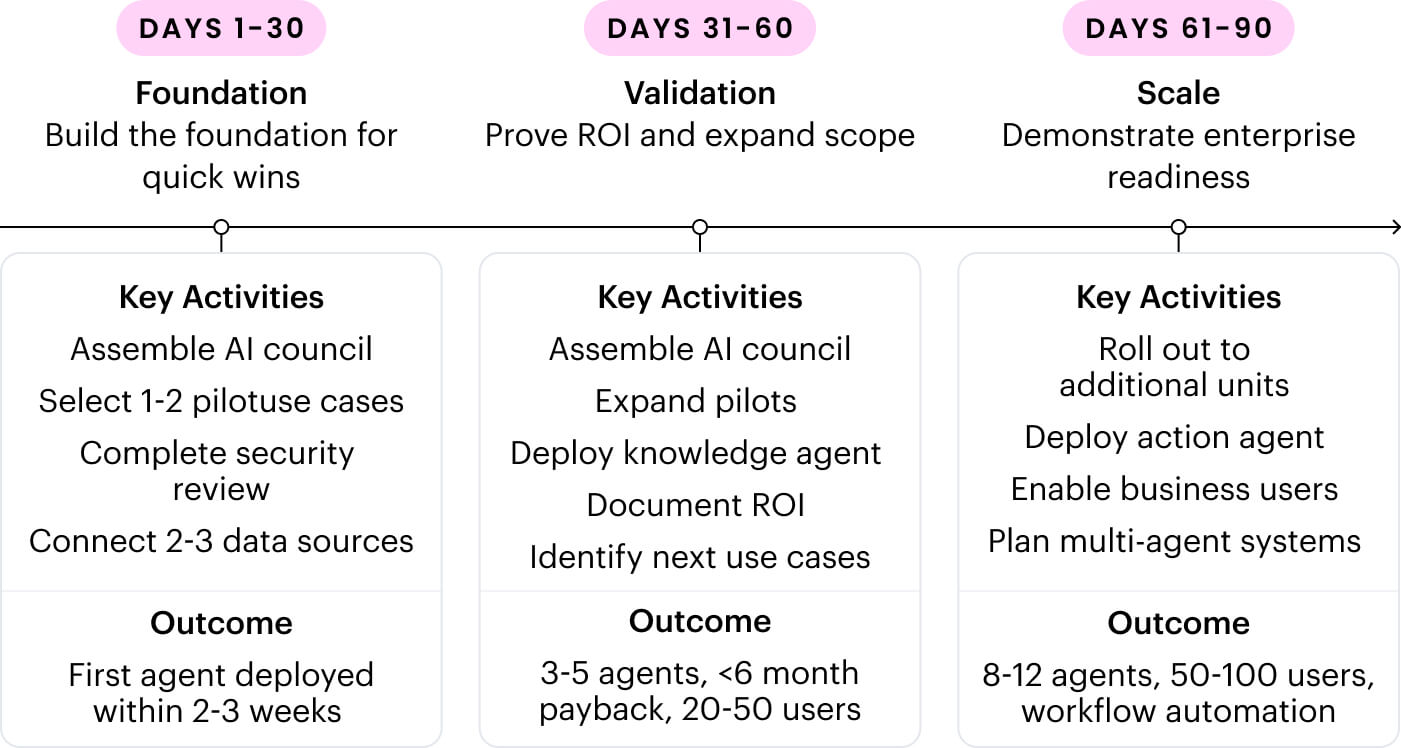

Your first 90 days: What to expect

The first 90 days determine whether you join the 79% of organizations with AI agents or the 45% achieving true operational transformation. Based on analysis of successful enterprise deployments, this timeline provides realistic expectations for moving from platform selection to measurable business value.

The pattern is consistent: Organizations that secure quick wins in weeks 1-8, validate ROI in months 2-3, and demonstrate scaling capability by day 90 build the momentum, internal champions, and executive confidence needed for enterprise-wide adoption. Those that over-plan, delay deployment, or skip the “crawl” stage typically stall in pilot purgatory.

CUSTOMER SPOTLIGHT

Prudential’s journey to agentic AI for marketing teams

From a clunky homegrown tool to an enterprise platform

Prudential, a 150-year-old global financial leader, faced a challenge familiar to many established enterprises: balancing a legacy of trust and regulation with urgent digital transformation needs. Their global marketing organization needed to scale personalized customer experiences in a highly complex, regulated B2B environment.

Their AI journey began with a homegrown solution that highlighted exactly what a siloed approach creates. They had one tool for content generation and another, separate tool for pre-compliance checks. The user interface was unfriendly, the workflow was disjointed, and the output was poor. This inefficient process created significant delays—content was frequently kicked back from compliance, extending cycle times and frustrating teams.

Their vendor evaluation criteria were clear:

- Secure, proprietary model (non-negotiable for legal and risk teams)

- Multilingual capabilities for international businesses

- Deep integration with core Martech stack (Adobe, Salesforce) for lead generation workflows

After testing multiple solutions, Prudential chose WRITER.

The solution: An agentic platform for business intelligence

Prudential started with content generation but quickly moved beyond basics to embrace agentic AI. Their most powerful use case is a voice-of-customer (VOC) analysis agent that executes an entire, complex business intelligence workflow autonomously:

Step 1: It ingests the data

The agent takes in a massive Excel file with thousands of unstructured customer comments from their Medallia feedback platform.

Step 2: It performs the analysis

The agent analyzes all 4,000+ rows of customer verbatims, automatically identifying and categorizing key themes, sentiment, and trends hidden in raw text.

Step 3: It delivers the strategy

The agent generates strategic recommendations for the business, suggesting concrete actions to improve customer satisfaction or fix website problems.
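
To make the ingest, analyze, recommend shape of that workflow concrete, here is a minimal sketch. It is not Prudential’s or WRITER’s implementation: simple keyword matching stands in for the model-based analysis, and the theme names, keywords, and sample comments are all invented for illustration.

```python
from collections import Counter

# Hypothetical keyword lists standing in for model-based theme detection.
THEMES = {
    "website": ("website", "login", "page"),
    "billing": ("bill", "invoice", "charge"),
    "support": ("agent", "wait", "call"),
}


def tag_themes(comment):
    """Step 2 (analysis): return every theme whose keywords appear in the comment."""
    text = comment.lower()
    return [theme for theme, words in THEMES.items()
            if any(word in text for word in words)]


def analyze(comments):
    """Count how many comments touch each theme."""
    counts = Counter()
    for comment in comments:
        counts.update(tag_themes(comment))
    return counts


def recommend(counts, top_n=2):
    """Step 3 (strategy): turn the most frequent themes into action items."""
    return [f"Investigate '{theme}' feedback ({n} comments)"
            for theme, n in counts.most_common(top_n)]


# Step 1 (ingest): a real pipeline would read rows from the feedback export;
# three invented comments keep the sketch self-contained.
comments = [
    "The website login page keeps timing out",
    "I was charged twice on my last bill",
    "Could not find the claims form on the website",
]

counts = analyze(comments)
actions = recommend(counts)
for action in actions:
    print(action)
```

The value of the agentic version is that one system owns all three steps, so no analyst has to hand results from one tool to the next.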

IMPACT

This entire workflow, which previously took analysts days of manual work, now completes in minutes. The team’s speed to deliver actionable insights increased by 50%.

CIO lessons learned

Lily Raymond (Global Marketing Strategy) and Ashley Chiretis (Director of AI) learned critical lessons every CIO should hear:

1. Don’t automate a broken process

“The biggest mistake you can make is just layering AI on top of a bad workflow. The real win comes when you truly deeply understand the process and really reimagine it. Use agentic AI as your chance to redesign how work gets done from scratch.”

2. Talk about productivity, not “efficiency”

To get teams on board, especially creative and knowledge workers, words matter. Prudential avoids the corporate-speak of “efficiency” and instead talks about “productivity”: giving people time back for strategic, fulfilling work. As Ashley says, “AI does not replace jobs right now. It replaces tasks.”

3. Let your people build

You don’t need a central IT team to build every AI solution. Prudential is training its marketing team to build their own agents using WRITER’s AI Studio. Ashley, who is not a technologist, built an AI matchmaking app for an internal mentoring program in less than an hour. When you give business experts user-friendly tools, you scale innovation across the company.

Additional results

- Faster campaign time-to-market

- Adoption rate across marketing organization

- Boost in creative capacity

Why traditional ROI models fail for agentic AI

Traditional ROI models focus narrowly on cost savings and task efficiency: “How much time did we save?” This factory-floor thinking completely misses the exponential value of agentic AI.

The critical distinction

- Generative AI ROI: Task-level automation (write faster, create content quicker)

- Agentic AI ROI: Outcome-level automation (complete entire workflows autonomously)

Agentic AI isn’t just a tool—it’s an extension of your team. It understands complex business objectives, creates execution plans, and autonomously delivers results across multiple systems. This fundamental difference demands a new measurement framework.

When you measure only “hours saved,” you miss the strategic value: revenue from faster time-to-market, cost avoidance from preventing errors before they occur, competitive advantage from experimentation velocity, and employee retention from eliminating soul-crushing repetitive work.

The four-pillar measurement framework

PILLAR 1

Efficiency & employee productivity

What to measure:

- Complete process time (before vs. after)

- Scale multiplier (how many more processes employees can manage)

- Strategic capacity (time freed for high-value work)

Formula: (Process volume × time savings × hourly rate) + (new processes × revenue)

EXAMPLES

Marketing team creates 50 campaigns/year, each requiring 22 hours. With agentic AI: 6 hours per campaign.

- Time saved: 50 × 16 hours × $75/hour = $60,000/year

- Scale enabled: Now handles 80 campaigns/year = 30 additional × $50K = $1.5M additional revenue

- Total Pillar 1 value: $1.56M/year

Forrester TEI validation: 200% improvement, $10M over 3 years
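
If you want to rerun the Pillar 1 formula with your own baseline, the marketing example above can be sketched in a few lines. The function and parameter names are illustrative assumptions; the inputs are the example’s figures.

```python
def pillar1_value(volume, hours_saved, hourly_rate, new_processes, revenue_each):
    """(Process volume x time savings x hourly rate) + (new processes x revenue)."""
    time_value = volume * hours_saved * hourly_rate
    scale_value = new_processes * revenue_each
    return time_value, scale_value, time_value + scale_value


time_value, scale_value, total = pillar1_value(
    volume=50,               # campaigns per year
    hours_saved=22 - 6,      # hours per campaign, before minus after
    hourly_rate=75,          # dollars per hour
    new_processes=80 - 50,   # additional campaigns the team can now handle
    revenue_each=50_000,     # dollars of revenue per additional campaign
)

print(f"Time saved:    ${time_value:,}")
print(f"Scale enabled: ${scale_value:,}")
print(f"Pillar 1:      ${total:,}")
```

Note how the scale term dwarfs the time-saved term. That gap is what an “hours saved” model misses.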

PILLAR 2

Revenue generation & business growth

What to measure:

- Time-to-market acceleration

- Opportunity capture (24/7 agents uncovering revenue opportunities)

- Market share expansion from speed advantage

Formula: (Revenue from accelerated launches × market timing) + (new opportunities × conversion × deal size)

EXAMPLES

Software company launches quarterly features. With agentic development/testing agents: monthly releases.

- Market timing advantage: 3-month lead = 25% higher adoption for new features

- Revenue impact: $100M product line × 5% adoption improvement = $5M/year

- New opportunities: Agents identify 50 upsell opportunities/month × 5% conversion × $10K = $3.125M/year

- Minus cannibalization (20%) = Net Pillar 2 value: $6.5M/year

Real-world examples: CirrusMD 234%, Prudential 70% faster, Adore Me 40% traffic growth
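
A sketch of the Pillar 2 calculation follows, with hypothetical function names and whole-number percentages to keep the arithmetic exact. One caveat: the upsell line is entered as the example’s stated $3.125M rather than derived, because the per-month inputs as printed (50 × 12 months × 5% × $10K) multiply out to $300K. Check that line against your own pipeline data before reusing it.

```python
def upsell_revenue(opps_per_month, conversion_pct, deal_size):
    """Annual revenue from agent-identified opportunities (whole-percent input)."""
    return opps_per_month * 12 * conversion_pct * deal_size // 100


def pillar2_net(launch_revenue, opportunity_revenue, cannibalization_pct):
    """Gross revenue impact minus the share that cannibalizes existing revenue."""
    gross = launch_revenue + opportunity_revenue
    return gross * (100 - cannibalization_pct) // 100


launch_revenue = 100_000_000 * 5 // 100   # $100M product line x 5% adoption improvement
stated_upsell = 3_125_000                 # upsell figure as stated in the example
derived_upsell = upsell_revenue(50, 5, 10_000)  # same line rebuilt from the printed inputs

net_stated = pillar2_net(launch_revenue, stated_upsell, cannibalization_pct=20)
net_derived = pillar2_net(launch_revenue, derived_upsell, cannibalization_pct=20)

print(f"Net Pillar 2 (stated upsell figure):  ${net_stated:,}")
print(f"Net Pillar 2 (upsell from inputs):    ${net_derived:,}")
```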

PILLAR 3

Risk mitigation & regulatory compliance

What to measure:

- Cost of prevented failures (brand damage, legal exposure, compliance fines)

- Review cycle time reduction

- Error rate reduction

Formula: (Prevented incidents × cost) + (review time saved × cost) + (error reduction value)

EXAMPLES

Legal team reviews 500 contracts/year averaging $50K value each. Agentic contract analysis:

- Prevented issues: Catch 10 major clause problems/year × $500K cost each = $5M/year

- Review acceleration: 7 days → same day = 500 contracts × 6 days × $300/day legal cost = $900K/year

- Error reduction: 5% fewer contract disputes × 500 contracts × $15K avg cost = $375K/year

- Minus platform cost ($2M) = Net Pillar 3 value: $4.275M/year

Forrester TEI validation: 85% faster reviews, $100K over 3 years
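
The Pillar 3 example can be sketched the same way. Names are illustrative assumptions; the inputs are the contract-review figures above, and the result is already net of the $2M platform cost.

```python
def pillar3_net(prevented_incidents, cost_per_incident,
                contracts, days_saved, legal_cost_per_day,
                dispute_reduction_pct, cost_per_dispute, platform_cost):
    """(Prevented incidents x cost) + (review time saved x cost) + (error reduction value)."""
    prevented = prevented_incidents * cost_per_incident
    review = contracts * days_saved * legal_cost_per_day
    errors = contracts * dispute_reduction_pct * cost_per_dispute // 100
    net = prevented + review + errors - platform_cost
    return prevented, review, errors, net


prevented, review, errors, net = pillar3_net(
    prevented_incidents=10, cost_per_incident=500_000,
    contracts=500, days_saved=7 - 1, legal_cost_per_day=300,
    dispute_reduction_pct=5, cost_per_dispute=15_000,
    platform_cost=2_000_000,
)

print(f"Prevented issues:    ${prevented:,}")
print(f"Review acceleration: ${review:,}")
print(f"Error reduction:     ${errors:,}")
print(f"Net Pillar 3:        ${net:,}")
```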

PILLAR 4

Business agility & innovation

What to measure:

- Organizational learning rate (how fast you test and learn)

- Experimentation velocity (tests per quarter)

- Employee engagement (retention from eliminating repetitive work)

Formula: (Faster experiments × conversion × revenue) + (retention × replacement cost) + (competitive advantage)

EXAMPLES

E-commerce company runs A/B tests. With agentic test design/analysis:

- Test velocity: 50 tests/year → 200 tests/year

- Winning tests: 150 additional × 10% find improvement × 2% conversion lift × $10M base = $3M/year

- Employee retention: Automation prevents 5 data analyst departures/year × $120K replacement cost = $600K/year

- Competitive response: Faster iteration = 6-month lead on competitors = $450K market share protection

- Total Pillar 4 value: $4.05M/year

Forrester TEI validation: 65% faster onboarding, $492K savings
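
Here is a comparable sketch for Pillar 4, again with assumed names and the e-commerce example’s inputs. The competitive-response value is passed in as a given estimate, since the example states it rather than deriving it.

```python
def pillar4_value(extra_tests, win_rate_pct, lift_pct, base_revenue,
                  departures_avoided, replacement_cost, competitive_value):
    """(Faster experiments x conversion x revenue) + (retention x replacement cost) + (competitive advantage)."""
    # Two whole-number percentages are multiplied, so divide by 100 * 100.
    experiments = extra_tests * win_rate_pct * lift_pct * base_revenue // 10_000
    retention = departures_avoided * replacement_cost
    return experiments, retention, experiments + retention + competitive_value


experiments, retention, total = pillar4_value(
    extra_tests=200 - 50,        # additional tests per year
    win_rate_pct=10,             # share of tests that find an improvement
    lift_pct=2,                  # conversion lift per winning test
    base_revenue=10_000_000,     # revenue base the lift applies to
    departures_avoided=5,
    replacement_cost=120_000,
    competitive_value=450_000,   # stated estimate, not derived here
)

print(f"Winning tests: ${experiments:,}")
print(f"Retention:     ${retention:,}")
print(f"Pillar 4:      ${total:,}")
```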

How to apply this framework

Step 1: Select 2-3 high-impact processes to measure across all four pillars

Step 2: Establish baseline metrics (current state before agentic AI)

Step 3: Deploy agents and measure for 90 days

Step 4: Calculate total strategic value:

- Pillar 1 (efficiency) + Pillar 2 (revenue) + Pillar 3 (risk avoided) + Pillar 4 (agility)

- Subtract platform costs and implementation costs

Step 5: Project annualized value and present to CFO/Board

Example Total ROI: $1.56M + $6.5M + $4.275M + $4.05M = $16.385M/year across all four pillars

This is why enterprises achieving 333% ROI focus on outcome automation, not task efficiency.
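
Steps 4 and 5 reduce to a sum and a projection. The sketch below uses hypothetical helper names and makes two assumptions: the Pillar 3 figure is already net of the $2M platform cost (so that cost is not subtracted a second time), and annualization is a plain linear extrapolation from the measurement window.

```python
def total_strategic_value(pillars, remaining_costs=0):
    """Sum the pillar values, then subtract any costs not already netted out."""
    return sum(pillars.values()) - remaining_costs


def annualize(measured_value, days=90):
    """Linear projection of a value measured over `days` to a full year."""
    return measured_value * 365 // days


pillars = {
    "efficiency": 1_560_000,   # Pillar 1
    "revenue": 6_500_000,      # Pillar 2
    "risk": 4_275_000,         # Pillar 3, already net of platform cost
    "agility": 4_050_000,      # Pillar 4
}

total = total_strategic_value(pillars)
print(f"Example total: ${total:,}/year")

# Hypothetical pilot: $90,000 of measured value in the first 90 days.
print(f"Annualized pilot value: ${annualize(90_000):,}")
```

A linear projection is the simplest defensible choice for a board slide. If adoption is still ramping at day 90, it will understate the steady-state value.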

Top failure patterns to avoid

1. Starting too complex: Build momentum with quick wins before complex automation.

2. Treating as IT project: Involve business process owners from day one.

3. Insufficient governance: Establish policies and approval workflows from the start.

4. Neglecting change management: Invest in training, celebrate wins, create champions.

5. Measuring wrong metrics: Track business outcomes, not “AI usage” metrics.

For comprehensive guidance on successful deployment, download WRITER’s Business Leaders Guide to Agentic AI.

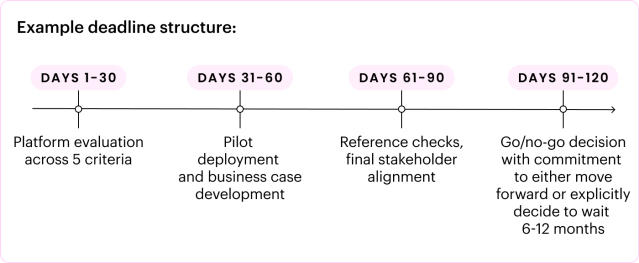

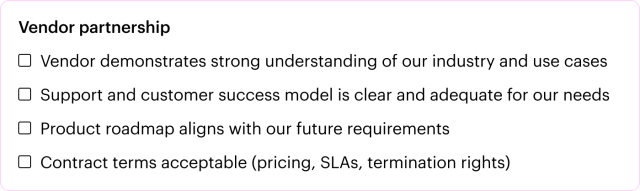

How to move from evaluation to decision

KEY TAKEAWAYS

- The hardest part of enterprise AI decisions isn’t evaluating options — it’s navigating internal stakeholders with competing concerns

- Your business case must address three constituencies simultaneously: Board (strategic risk), CFO (financial ROI), CISO (security/compliance)

- The “perfect” decision doesn’t exist—successful CIOs choose platforms that minimize regret while maximizing learning velocity

You’ve evaluated platforms. You’ve built your comparison framework. You understand the technology. But you’re stuck.

Not because you don’t know which platform is best. You’re stuck because:

- Your CFO wants ironclad ROI proof before you’ve even piloted

- Your CISO won’t sign off until they’ve reviewed every security control (which takes 6 months)

- Your business units are building shadow AI agents because your evaluation is taking too long

- Your board wants a 3-year AI strategy but the technology changes every 3 months

This is the reality of enterprise AI decisions today. The technical evaluation is the easy part. The hard part is building consensus across stakeholders with fundamentally different concerns and timelines.

This section provides a decision framework and answers to the toughest objections you’ll face internally — so you can move from analysis paralysis to confident action.

The three-constituency business case

Enterprise AI platform decisions require simultaneous buy-in from three groups with non-overlapping priorities:

FOR THE BOARD

Strategic transformation and competitive advantage

- What they care about: Is this defensive (catching up) or offensive (building sustainable advantage)? How does agentic AI enable our business strategy, not just improve operations?

- The case to make: According to Gartner, 50% of all knowledge workers will work with, govern, or build their own agents within two years. The organizations that establish agentic AI platforms now will fundamentally re-architect their operations while competitors are still running pilots. This creates compounding advantage—your agents get smarter through continuous learning, your teams get faster at building new capabilities, and your cost structures allow pricing competitors can’t match.

- Frame as “operational re-architecture,” not “technology adoption.” You’re building the foundation for how your business operates in an AI-native world. The question isn’t whether to invest, but whether to lead the transformation or follow it.

- Key metrics for Board:

- Strategic capacity unlocked (executive time freed for growth initiatives)

- Competitive positioning (what you can do that competitors cannot)

- Market response speed (launch products, enter markets, respond to moves)

- Organizational learning rate (how fast you’re getting better at AI)

FOR THE CFO

Financial returns and risk-adjusted value

- What they care about: Payback period, hidden costs, ROI confidence, downside scenarios if adoption fails.

- The case to make: Forrester’s independent TEI study (April 2025) projects 333% ROI over three years, with a payback period of less than six months, for WRITER deployments.

- Three-year financial model:

- Benefits: $10.0M labor efficiencies, $5.0M agency cost avoidance, $100K compliance improvements, $56K faster onboarding, $492K legacy savings

- Costs: $2.0M subscription, $2.1M implementation (internal team time), $82K training

- Risk mitigation: Phased approach limits downside. First 90 days cost <$200K with measurable ROI. If pilots fail, you’ve learned cheaply. If successful, scale with proven value.

- Alternative cost: Building in-house requires $5M-$8M over three years plus hiring 5-8 specialized FTEs at $200K+ each, with higher failure risk.
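
Your CFO will want to rebuild the model, so it helps to hand over something they can edit. The sketch below simply totals the benefit and cost line items listed above. It is an undiscounted sum with assumed variable names. TEI studies typically report risk-adjusted present values, so this simple ratio should not be expected to reproduce the 333% headline figure.

```python
# Three-year line items as listed above, in dollars.
benefits = {
    "labor_efficiencies": 10_000_000,
    "agency_cost_avoidance": 5_000_000,
    "compliance_improvements": 100_000,
    "faster_onboarding": 56_000,
    "legacy_savings": 492_000,
}
costs = {
    "subscription": 2_000_000,
    "implementation": 2_100_000,   # internal team time
    "training": 82_000,
}

total_benefits = sum(benefits.values())
total_costs = sum(costs.values())
net_benefit = total_benefits - total_costs
simple_roi_pct = net_benefit / total_costs * 100   # undiscounted, not risk-adjusted

print(f"Total benefits: ${total_benefits:,}")
print(f"Total costs:    ${total_costs:,}")
print(f"Net benefit:    ${net_benefit:,}")
print(f"Simple ROI:     {simple_roi_pct:.0f}%")
```

Swap in your own labor rates, agency spend, and subscription quote, and the same four lines give you a first-pass answer for the downside scenario too.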

FOR THE CISO

Security, compliance, and governance at scale

- What they care about: New vulnerabilities, shadow AI prevention, compliance maintenance, security review burden.

- The case to make: The biggest security risk isn’t the platform — it’s ungoverned agents business teams are already building using consumer tools outside IT visibility. An enterprise platform like WRITER gives centralized governance over all AI activity, preventing shadow AI while enabling innovation.

- One security review, permanent governance: Unified architecture needs one comprehensive assessment covering model hosting, data access, agent permissions, and observability. Compare to patchwork approaches requiring separate reviews per component.

- Compliance by design: SOC 2 Type II, HIPAA, GDPR, PCI certifications accelerate legal approval vs. retrofitted security requiring extensive vetting.