Demystifying the White House Executive Order on AI

What enterprise leaders need to know

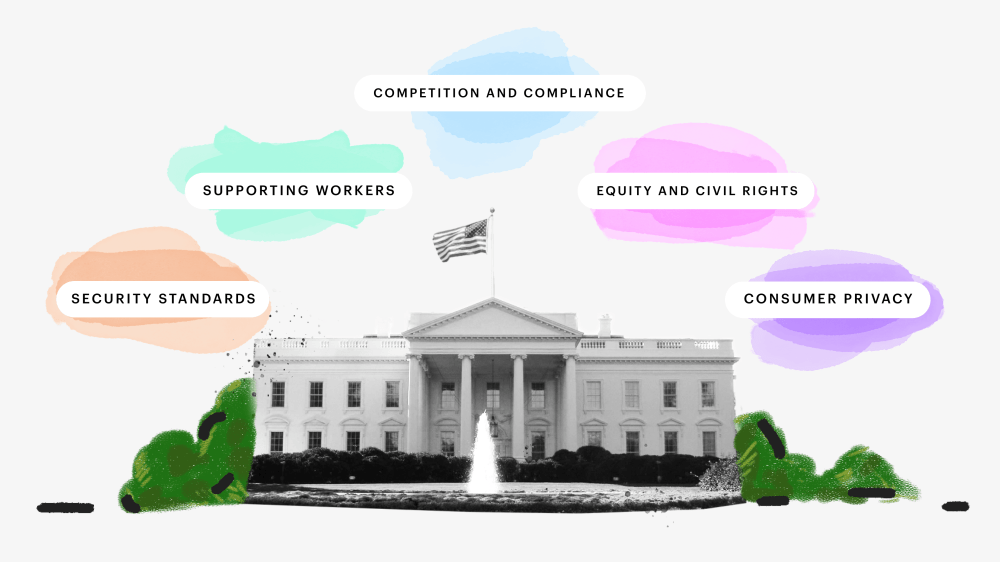

This week, the White House issued the first-ever Executive Order on Safe, Secure, and Trustworthy Artificial Intelligence. At WRITER, we have been aligning our approach with the principles and priorities outlined in the Order since our inception. We prioritize safety, security, responsible innovation, and collaboration. Our software undergoes robust evaluations, testing, and risk mitigation measures to ensure it functions as intended, is resilient against misuse or dangerous modifications, and complies with applicable federal laws and policies.

As the first-ever executive order on AI, the Biden-Harris Order signals the importance of responsible AI development and use. It’s incumbent upon us to make it easy and obvious for companies using our software to prove their compliance to the regulatory bodies that will enforce the Order. We understand that companies may need to demonstrate that the software they use, such as WRITER, adheres to the requirements outlined in the Order.

While the certification process may involve the emergence of new companies and protocols, companies shouldn’t stand on the sidelines. Instead, they should actively work with us to ensure compliance and responsible AI use. We’re committed to supporting companies in navigating the evolving regulatory landscape and providing the necessary tools and resources to meet the requirements set forth in the Order.

We’re dedicated to promoting a fair, open, and competitive ecosystem for AI and related technologies. We invest in AI-related education, training, research, and development to support the growth of AI talent and innovation. By working together, we can drive responsible AI development, protect the interests of users, advance equity and civil rights, and ensure privacy and civil liberties are safeguarded.

WRITER has pored over all 19,000 words of the Executive Order so you don’t have to. Let’s examine the key takeaways from the Order, the implications for enterprise companies, and the actions you can take today in response. We’ll also discuss how WRITER has already set the standard for safe, secure, and trustworthy AI. Finally, we’ve provided resources for you to gain a deeper understanding of these issues and how to address them at your organization.

- The White House Executive Order on AI emphasizes the importance of responsible AI development and use, and companies should actively work towards compliance and responsible AI use.

- The Order focuses on creating new safety and security standards for AI, including sharing safety test results with the government and establishing rigorous standards for AI system testing.

- The Order promotes innovation and competition in the AI industry through initiatives like the National AI Research Resource program and support for small businesses.

- Protecting consumer privacy is a key aspect of the Order, with a focus on transparency, privacy-enhancing technologies, and compliance with federal nondiscrimination laws.

- The Order addresses algorithmic bias and discrimination, aiming to advance equity and civil rights in various sectors impacted by AI. WRITER proactively mitigates AI bias and ethical concerns through rigorous auditing and fine-tuning processes.

Table of contents

- Creating new safety and security standards for AI

- Promoting innovation and competition

- Protecting consumer privacy

- Advancing equity and civil rights

- Supporting and protecting workers

- Prepping your organization for the White House Executive Order on AI

- WRITER is built on a foundation of safety, trust, and innovation for the enterprise environment

Creating new safety and security standards for AI

The Executive Order outlined two key measures that have implications for enterprise companies working with large language model (LLM) vendors. First, it requires developers of powerful AI systems to share safety test results and critical information with the U.S. government. This means that companies developing foundation models with potential risks to national security, economic security, or public health and safety must notify the government during the model training process and share the results of red-team safety tests. This requirement aims to ensure that AI systems are safe, secure, and trustworthy before they are made public.

Second, the Executive Order emphasizes the development of standards, tools, and tests to ensure the safety, security, and trustworthiness of AI systems. The National Institute of Standards and Technology (NIST) will establish rigorous standards for extensive red-team testing to ensure safety before public release. The Department of Homeland Security will apply these standards to critical infrastructure sectors and establish the AI Safety and Security Board. Additionally, the Departments of Energy and Homeland Security will address the threats AI systems pose to critical infrastructure, as well as chemical, biological, radiological, nuclear, and cybersecurity risks. These actions represent significant steps taken by the government to advance the field of AI safety.

How WRITER sets the standard for safe, transparent generative AI development

Our techniques and methodologies aim to provide a comprehensive framework for understanding and auditing AI systems, meeting the objectives set forth in the Executive Order.

- WRITER employs a multi-tiered approach to provide insights into decision-making processes.

- Tools and algorithms like attention mechanism analysis and SHAP values offer visibility into the inner workings of the model.

- Comprehensive logging and provenance tracking enable real-time monitoring and auditing.

- Our commitment to open-source and community auditing promotes transparency and accountability.
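To make the logging and provenance point more concrete, here is a minimal Python sketch of a tamper-evident audit log. It illustrates the general idea under our own assumptions and doesn't describe how WRITER implements logging: the `AuditLog` class, its field names, and the `demo-model` identifier are all hypothetical. Each entry stores a hash of the previous entry, so any later edit to the record breaks the chain and shows up during verification.

```python
import hashlib
import json
from dataclasses import dataclass, field


def _digest(record: dict) -> str:
    """Hash a record deterministically (keys sorted before serializing)."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


@dataclass
class AuditLog:
    """Hypothetical append-only log; each entry commits to the one before it."""

    entries: list = field(default_factory=list)

    def append(self, model_id: str, prompt: str, output: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        record = {
            "index": len(self.entries),
            "model_id": model_id,
            # Store digests, not raw text, so the log holds no sensitive content.
            "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
            "output_sha256": hashlib.sha256(output.encode("utf-8")).hexdigest(),
            "prev_hash": prev_hash,
        }
        record["hash"] = _digest(record)  # computed before "hash" is added
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash and check that the chain is unbroken."""
        prev_hash = "0" * 64
        for record in self.entries:
            body = {k: v for k, v in record.items() if k != "hash"}
            if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
                return False
            prev_hash = record["hash"]
        return True


log = AuditLog()
log.append("demo-model", "Summarize the Q3 report", "Q3 summary text")
log.append("demo-model", "Draft a follow-up email", "Hello team")
print(log.verify())  # True: the chain is intact
```

A production system would also record timestamps, user identity, and model version, and would write entries to durable storage. Those details are left out here to keep the sketch short and deterministic.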

Resources on selecting generative AI vendors and safe AI implementation

How to evaluate LLM/AI vendors for enterprise solutions

Make informed decisions when selecting AI vendors. Prioritize safety, protect sensitive information, and ensure compliance with regulations. This blog post serves as a comprehensive resource to navigate the complexities of vendor evaluation and ensure the safety and well-being of organizations and stakeholders.

The business leader’s guide to adopting generative AI

Get insights and strategies on common use cases for AI, challenges in integration, assessing existing workflows, planning change management, establishing governance structures, and maintaining brand safety. This guide can help you make the transition to AI easier by providing guidance on how to reduce risks and ensure secure and responsible implementation of generative AI technology.

Promoting innovation and competition

The Executive Order includes several efforts to promote innovation and competition in the field of AI. It directs the National Science Foundation (NSF) to launch a pilot program called the National AI Research Resource (NAIRR) to provide computational, data, and training resources for AI research and development. The Order also establishes NSF Regional Innovation Engines and National AI Research Institutes to prioritize AI-related work and foster innovation.

Additionally, the Order promotes competition in the semiconductor industry by implementing flexible membership structures, mentorship programs, and increased resources for startups and small businesses. The Small Business Administration is directed to prioritize funding for AI-related initiatives, and the Federal Trade Commission is encouraged to ensure fair competition in the AI marketplace. These efforts aim to foster innovation, support small businesses, and promote competition in the AI industry.

How WRITER supports generative AI innovation and competition

- WRITER is the only full-stack generative AI platform with the quality and security required in the enterprise, providing a competitive advantage in the market.

- The WRITER platform consists of Palmyra (WRITER-built LLMs), Knowledge Graph, and AI guardrails, offering a comprehensive solution for businesses to leverage generative AI.

- WRITER offers AI apps, composable UI options, chatbots, integrations, and APIs, enabling businesses to innovate and customize their AI applications.

- Our focus on security, data privacy, and compliance, including adherence to SOC 2 Type II, GDPR, CCPA, HIPAA, and PCI, ensures a competitive edge in terms of trust and regulatory compliance.

- Our customers, such as Accenture, Vanguard, 6sense, Commvault, and healthcare companies, have achieved significant business impact by leveraging WRITER generative AI capabilities.

- Our plans for the future include developing industry-specific LLMs, AI agents, enterprise multi-modality LLMs, and expanding our international presence, further driving business innovation and competition.

Resources for enterprise generative AI innovation

Big book of enterprise generative AI use cases

This guide is a comprehensive resource for exploring the immense opportunities of generative AI. It offers practical insights and real-world case studies to accelerate growth, increase productivity, and ensure governance across all functions of your business. By leveraging the power of generative AI and implementing the strategies outlined in this guide, you can gain a competitive edge and drive real business impact in the rapidly evolving landscape of AI technology.

How enterprise companies can map generative AI use cases for fast, safe implementation

Discover a strategic framework to identify and implement AI use cases. Get practical insights on how to use generative AI technology to drive productivity, improve customer experiences, and achieve measurable business outcomes. By following the instructions given in this guide, you can maximize AI’s capabilities, stay ahead of the competition, and gain an advantage in the ever-changing landscape of generative AI.

Protecting consumer privacy

Section 8 of the Executive Order emphasizes the protection of consumers, patients, passengers, and students in relation to AI. It calls for independent regulatory agencies to address risks associated with AI, including fraud, discrimination, privacy threats, and financial stability. The section highlights the importance of transparency in AI models, responsible use of AI in healthcare and human services, and compliance with federal nondiscrimination laws.

Section 9 focuses on the protection of privacy in the context of AI. It directs the Director of the Office of Management and Budget (OMB) to evaluate commercially available information (CAI) that contains personally identifiable information and develop guidelines for agencies to mitigate privacy risks associated with AI. The section also emphasizes the use of privacy-enhancing technologies (PETs) and the development of guidelines, tools, and practices to support their implementation.

Section 10 aims to advance the use of AI within the federal government. It establishes an interagency council to coordinate AI development and use across agencies, and the Director of OMB is responsible for issuing guidance to strengthen the effective and appropriate use of AI, manage risks, and promote innovation. The section also addresses the need for AI governance boards, risk management practices, tracking and assessment methods, and prioritizing AI projects within agencies.

How WRITER protects consumer privacy

At WRITER, we’ve protected consumer privacy and data security from day one. Our commitment to privacy and data security aligns with the principles outlined in the Executive Order, ensuring the protection of consumer privacy in the context of AI.

Privacy isn’t just a legal requirement for us — it’s a core value that drives our mission. We’ve developed an enterprise-grade AI writing platform as a secure alternative to consumer tools, ensuring that your data is handled with the utmost care and respect.

- The WRITER generative AI platform ensures that user data isn’t used to train its large language models, and what users write in their online tools stays there.

- WRITER follows enterprise-grade security practices, including secure access management, encryption of data at rest and in-transit, and network security measures.

- The WRITER infrastructure is hosted on Google Cloud Platform (GCP) and complies with GCP’s security practices.

- WRITER has undergone audits and received certifications such as SOC 2 Type II, GDPR, CCPA, HIPAA, PCI, and Privacy Shield, demonstrating its commitment to compliance with privacy and security standards.

- WRITER conducts regular vulnerability audits and security pen tests, and has dedicated security personnel overseeing security, privacy, access, reliability, and disaster response.

- WRITER provides transparency about its privacy practices and offers a comprehensive privacy policy that outlines how customer data is processed and protected.

Resources for protecting consumer privacy in the age of AI

Making the most of AI without compromising data privacy

Get practical tips and strategies for assessing generative AI software, understanding security and privacy features, developing corporate policies, and training your employees on data privacy. By following this guidance, you can ensure the responsible use of AI, safeguard sensitive consumer information, and comply with the privacy requirements set forth in the Executive Order.

Every company needs a corporate AI policy

This post is your essential guide to creating a corporate AI policy, empowering you as an enterprise leader to protect consumer privacy in line with the Executive Order. It provides step-by-step instructions on assessing AI needs, establishing a governance framework, educating employees, monitoring AI performance, and implementing regular policy reviews.

Advancing equity and civil rights

The Executive Order addresses algorithmic bias and discrimination in AI. It tells the Attorney General to work with agencies to prevent civil rights violations and discrimination related to AI, especially in the criminal justice system. The Order says agencies need to use their civil rights and civil liberties offices to prevent and address unlawful discrimination resulting from the use of AI in federal programs and benefits administration.

Additionally, it encourages the use of appropriate methodologies, including AI tools, to evaluate and minimize bias in housing markets, consumer financial markets, and hiring processes. The Order also highlights the importance of guidance to ensure compliance with fair housing and credit laws and to combat discriminatory outcomes in real estate-related transactions. Overall, the Executive Order aims to promote equity and civil rights by addressing algorithmic bias and discrimination in various sectors impacted by AI.

How WRITER proactively mitigates AI bias and ethical concerns

WRITER addresses bias and ethical concerns in alignment with the Executive Order through meticulous data cleaning and preprocessing, oversight and annotation guidelines, model auditing, fine-tuning with human feedback, a user feedback loop, and adapting to different contexts and languages.

- Data cleaning and preprocessing: WRITER meticulously curates and preprocesses training data to remove sensitive content and flag potential bias hotspots.

- Oversight and annotation guidelines: Human annotators follow strict rules to avoid introducing bias when labeling the data.

- Model auditing: Rigorous audits are conducted to assess the model’s propensity for biased or unsafe content, ensuring objectivity.

- Fine-tuning with human feedback: The model is adjusted based on audit results using techniques like Reinforcement Learning from Human Feedback (RLHF).

- User feedback loop: WRITER actively engages with its user community to address and fine-tune the model based on reports of biased outputs.

- Scrutiny of training data: WRITER adds layers of scrutiny and control to mitigate biases inherited from the training data.

- Context-aware fine-tuning: Additional features are incorporated into the model’s architecture to understand specific contexts and provide nuanced responses.

- Language-specific models: WRITER develops language-specific versions of its models, fine-tuned with data representative of linguistic and cultural idiosyncrasies.

- Annotator teams: Annotators from diverse cultural backgrounds contribute to creating a model that avoids favoring any particular group.
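As one illustration of what a model audit can measure, the Python sketch below computes the rate of favorable outcomes per group and the gap between the highest and lowest rates (often called a demographic parity gap). This is a simplified example under our own assumptions, not WRITER’s audit procedure: the function names, the group labels, and the sample data are hypothetical, and a real audit would use many more metrics and far more data.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favorable outcomes for each group.

    `records` is an iterable of (group, is_favorable) pairs, where
    `is_favorable` is True when the model output favored that case.
    """
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, is_favorable in records:
        totals[group] += 1
        if is_favorable:
            favorable[group] += 1
    return {group: favorable[group] / totals[group] for group in totals}


def parity_gap(rates):
    """Difference between the highest and lowest group selection rates."""
    return max(rates.values()) - min(rates.values())


# Hypothetical audit sample: 4 cases for group "a", 4 for group "b".
sample = [
    ("a", True), ("a", True), ("a", False), ("a", False),
    ("b", True), ("b", False), ("b", False), ("b", False),
]
rates = selection_rates(sample)
print(rates)              # {'a': 0.5, 'b': 0.25}
print(parity_gap(rates))  # 0.25
```

A gap near zero means the groups receive favorable outcomes at similar rates. A larger gap is a signal to investigate further; on its own, it doesn't prove that discrimination occurred.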

Resources for understanding and mitigating AI bias and ethical concerns

Tackling AI bias: a guide to fairness, accountability, and responsibility

By prioritizing fairness, transparency, and diversity in AI systems, conducting audits, and collaborating with stakeholders, you can mitigate biases, promote inclusivity, and ensure equitable outcomes. Implementing these strategies will empower enterprise leaders to drive positive change and uphold the principles of equity and civil rights in their AI practices.

The importance of AI ethics in business: a beginner’s guide

This post provides enterprise leaders with valuable insights on the importance of AI ethics and how to establish ethical practices in their organizations. By understanding the legal and ethical implications of AI, setting clear principles and guidelines, and implementing governance structures, you can ensure the responsible use of AI technology. This will help advance equity and civil rights by protecting vulnerable populations, addressing privacy concerns, reducing legal risks, improving public perception, and gaining a competitive edge in the market.

Supporting and protecting workers

The Executive Order aims to support and protect workers in the context of generative AI by taking several actions. These include producing reports on the labor-market effects of AI, analyzing the abilities of agencies to support workers displaced by AI, developing principles and best practices for employers to mitigate potential harms and maximize benefits to employees, prioritizing resources for AI-related education and workforce development, and addressing discrimination and biases in AI systems used for hiring and in housing and consumer financial markets.

How WRITER helps enterprise companies support and protect employees in the AI era

At WRITER, we’re dedicated to promoting people-first workplaces in the AI era. We understand the importance of prioritizing employee well-being, promoting diversity and inclusion, keeping lines of communication open, and creating an environment that encourages collaboration and creativity.

WRITER supports a “human-in-the-loop” approach to developing and implementing generative AI by advocating for the use of AI as a tool to enhance human capabilities rather than replace them. The importance of pairing human expertise, creativity, and critical thinking with AI technology to achieve optimal results can’t be overstated.

Resources for supporting enterprise employees in the age of AI

Future-ready your people for the AI workplace

Get practical advice on adapting hiring practices, supporting existing employees, crafting a corporate AI policy, developing a generative AI adoption roadmap, and hiring an AI program director. By following these recommendations, you can create a people-first workplace that prioritizes employee well-being, promotes diversity and inclusion, and ensures effective communication and collaboration in the face of AI transformation.

Prepping your organization for the White House Executive Order on AI

It’ll take time to turn the Biden-Harris Executive Order on AI into legislative action, but that doesn’t mean you should wait to respond. Here are some actions enterprise leaders can take today and into the future to protect their organizations from being blindsided or slowed down by new regulations on AI.

Immediate actions

- Conduct an AI risk assessment. Initiate a comprehensive assessment of AI systems to identify potential risks concerning security, privacy, or ethics and develop strategies to mitigate those risks.

- Establish an AI governance committee. Form a dedicated committee or task force for overseeing AI development and deployment, ensuring cross-functional collaboration and decision-making.

- Review and update AI policies. Evaluate and update existing AI policies and guidelines to align with the principles outlined in the executive order, addressing safety, security, privacy, and ethical considerations.

- Enhance data privacy and security measures. Strengthen data privacy and security protocols to protect sensitive information used in AI systems, and ensure compliance with relevant data protection regulations.

- Invest in AI training and education. Allocate resources for AI training and education programs for employees to enhance AI literacy and awareness.

- Engage with government initiatives. Stay informed about government-led initiatives, guidelines, and regulations related to AI, and engage in consultations to shape responsible AI policies and standards.

Short- to medium-term actions

- Foster partnerships and collaborations. Collaborate with other organizations, research institutions, and industry experts to share knowledge, exchange best practices, and drive responsible AI innovation.

- Promote a culture of responsible, ethical AI use. Encourage open discussions about AI’s impact, potential biases, and ethical considerations, fostering a sense of responsibility and accountability among employees working with AI systems.

Longer-term actions

- Innovate responsibly. Engage in research and development that advances responsible AI, supporting initiatives that promote fair competition and developing ethical AI technologies.

- International engagement and collaboration. Participate in international discussions and collaborations on AI standards, safety, and responsible use, fostering global partnerships.

- Continuous engagement and feedback. Maintain a continuous dialogue with industry associations, governmental bodies, and other stakeholders to contribute to the ongoing discussions on AI governance.

WRITER is built on a foundation of safety, trust, and innovation for the enterprise environment

WRITER is fully committed to supporting the principles and priorities outlined in the White House Executive Order. We encourage companies to collaborate with us to ensure their compliance with regulations and responsible AI use. Together, we can foster a culture of trust, accountability, and responsible innovation in the AI industry.

Contact our sales team to learn more about how we can support your business transformation.